Last Updated: December 24, 2025 | Review Stance: Independent testing, includes affiliate links

TL;DR - LiveBench 2025 Hands-On Review

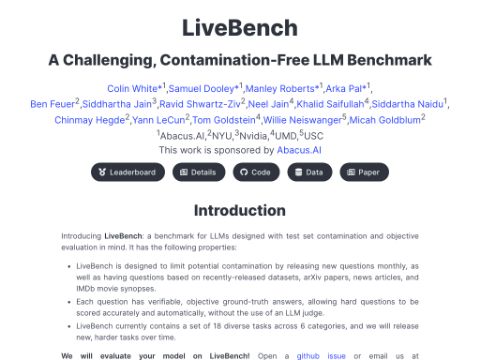

LiveBench is a leading contamination-free LLM benchmark in late 2025, with regularly updated questions and objective scoring. It challenges top models across reasoning, math, coding, and more, which makes it well suited to fair, reproducible evaluations, in contrast to crowd-voted arenas.

LiveBench Review Overview and Methodology

LiveBench is an open-source LLM benchmark designed to combat test set contamination through frequent question updates and objective ground-truth scoring. It launched in 2024 and was spotlighted at ICLR 2025. This December 2025 review examines its latest release (LiveBench-2025-12-23), leaderboard performance, and real-world utility for LLM developers.

We analyzed the benchmark's structure, ran sample evaluations, reviewed contamination safeguards, and compared results to other platforms like LMSYS Arena. LiveBench excels at providing challenging, unbiased assessments that reflect true model capabilities.

LiveBench leaderboard overview (2025)

Reasoning & Math

Hard problems from recent competitions and papers.

Coding Tasks

Agentic and standard programming challenges.

Data & Language

Analysis and comprehension with fresh sources.

Contamination-Free

Delayed releases and procedural questions.

Core Features of LiveBench

Key Advantages in LiveBench

- Regular Updates: New questions monthly, full refresh every 6 months.

- Objective Scoring: Verifiable answers, no LLM judges needed.

- Diverse Categories: 7 areas with 21 tasks for comprehensive testing.

- Delayed Release: Recent questions hidden to prevent training leaks.

- Open Source: GitHub repo and Hugging Face data.
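To make the objective-scoring idea concrete, here is a minimal sketch of judge-free grading: each model answer is compared against a stored ground-truth string after light normalization. The function names (`normalize`, `score_exact_match`, `score_batch`) and the normalization rules are our own assumptions for illustration; LiveBench's actual per-task scorers are not described here and are likely more involved.

```python
import re


def normalize(answer: str) -> str:
    """Lowercase, trim, and collapse whitespace so formatting differences don't matter."""
    return re.sub(r"\s+", " ", answer.strip().lower())


def score_exact_match(model_answer: str, ground_truth: str) -> float:
    """Return 1.0 when the normalized answers agree, otherwise 0.0 (no LLM judge involved)."""
    return 1.0 if normalize(model_answer) == normalize(ground_truth) else 0.0


def score_batch(pairs: list[tuple[str, str]]) -> float:
    """Average exact-match score over (model_answer, ground_truth) pairs, as a percentage."""
    if not pairs:
        return 0.0
    return 100.0 * sum(score_exact_match(m, g) for m, g in pairs) / len(pairs)


if __name__ == "__main__":
    # Hypothetical answers, not real benchmark data.
    print(score_batch([("  Paris ", "paris"), ("7", "8")]))  # 50.0
```

The point of the sketch is that the grade depends only on a verifiable answer, so two people scoring the same output always get the same number.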

How LiveBench Works

- Sources: Recent news, arXiv, competitions, datasets

- Harder versions of BBH, AMPS, IFEval

- Sponsored by Abacus.AI

- Leaderboard auto-updated with new models
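The delayed-release safeguard listed among the features can be pictured as a simple date filter: questions newer than a hold-back window stay private. The sketch below is an assumption-laden illustration only. The `Question` class, the `publicly_visible` function, and the 30-day `hold_back_days` default are hypothetical and are not taken from LiveBench's code or documentation.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Question:
    question_id: str      # hypothetical identifier
    release_date: date    # date the question was added to the benchmark


def publicly_visible(questions: list[Question], today: date,
                     hold_back_days: int = 30) -> list[Question]:
    """Keep only questions older than the hold-back window (window length is assumed)."""
    cutoff = today - timedelta(days=hold_back_days)
    return [q for q in questions if q.release_date <= cutoff]


if __name__ == "__main__":
    # Made-up questions for illustration.
    pool = [
        Question("q1", date(2025, 10, 1)),
        Question("q2", date(2025, 12, 20)),
    ]
    visible = publicly_visible(pool, today=date(2025, 12, 24))
    print([q.question_id for q in visible])  # ['q1']
```

Holding back the newest questions means a model trained on everything public today still faces items it cannot have seen.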

LiveBench Leaderboard & Performance

As of December 2025, LiveBench's latest release includes tougher reasoning tasks, and top models score around 75% on the global average, which shows there is still clear headroom.
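For readers wondering how a single global figure can come out of many tasks, one plausible aggregation is a mean of per-category means, so that a category with many tasks does not dominate. This is our assumption about the aggregation, not something the leaderboard text above confirms, and the scores in the example are made up.

```python
from statistics import mean


def global_average(task_scores: dict[str, dict[str, float]]) -> float:
    """Average task scores within each category, then average the category means."""
    category_means = [mean(tasks.values()) for tasks in task_scores.values() if tasks]
    return mean(category_means) if category_means else 0.0


if __name__ == "__main__":
    # Hypothetical scores (percent), not real leaderboard data.
    scores = {
        "reasoning": {"task_a": 60.0, "task_b": 80.0},
        "coding": {"task_c": 90.0},
    }
    print(global_average(scores))  # 80.0
```

Note that a flat mean over the three tasks would give about 76.7 instead of 80.0, so the choice of aggregation matters when comparing headline numbers across benchmarks.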

Top Performers on LiveBench

- Claude 4.5 (Anthropic)

- Gemini 3 (Google)

Objective Scoring

Contamination-Free

LiveBench Use Cases & Comparisons

Best Scenarios for LiveBench

- Fair model ranking without contamination risks

- Objective testing for research and releases

- Tracking progress on hard, fresh problems

- Comparing to crowd-voted arenas like LMSYS

LiveBench vs LMSYS Arena

- Objective Answers

- Fresh Questions

- No Human/LLM Judges

- Open-Source

LiveBench Access, Costs & Value

- Benchmark (website and repo): free and open, no cost to view the leaderboard.

- Custom runs (model inference): API fees vary, pay for usage.

LiveBench is free to access and view; running evaluations requires model API costs.
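Because the only real cost is model inference, a rough budget can be estimated from token counts and per-token prices. The sketch below is back-of-the-envelope arithmetic under stated assumptions: the function name `estimate_run_cost`, the question count, the token averages, and the prices are all hypothetical placeholders, so substitute your provider's actual rates.

```python
def estimate_run_cost(num_questions: int,
                      avg_input_tokens: float,
                      avg_output_tokens: float,
                      input_price_per_mtok: float,
                      output_price_per_mtok: float) -> float:
    """Estimate API spend in dollars; prices are per million tokens."""
    input_cost = num_questions * avg_input_tokens / 1_000_000 * input_price_per_mtok
    output_cost = num_questions * avg_output_tokens / 1_000_000 * output_price_per_mtok
    return input_cost + output_cost


if __name__ == "__main__":
    # All figures below are illustrative assumptions, not LiveBench or vendor numbers.
    cost = estimate_run_cost(
        num_questions=1000,
        avg_input_tokens=2000,
        avg_output_tokens=1000,
        input_price_per_mtok=3.0,
        output_price_per_mtok=15.0,
    )
    print(f"${cost:.2f}")  # $21.00
```

Reasoning-heavy models can emit far more output tokens per question than this example assumes, so output pricing usually dominates the estimate.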

Value of LiveBench

Highlights

- Fair comparisons

- Objective metrics

- Regular freshness

- Community-driven

Best For

- Researchers

- Model developers

- Teams prioritizing benchmark fairness

Pros & Cons: Balanced LiveBench Assessment

Strengths

- Effective contamination prevention

- Fully objective scoring

- Challenging, diverse tasks

- Regular updates keep it relevant

- Open-source and transparent

- Influences real model development

Limitations

- Costs for running large evaluations

- No subjective/human preference testing

- Setup required for custom runs

- Fewer tasks than some arenas

- Delayed access to newest questions

Who Should Use LiveBench?

Perfect For

- LLM researchers needing fair rankings

- Developers tracking objective progress

- Teams avoiding contaminated benchmarks

- Academic and industry comparisons

Consider Alternatives If

- You want human preference voting

- Subjective tasks are a priority

- You need quick, casual comparisons

- You need zero-cost, instant runs

Final Verdict: 9.5/10

LiveBench sets the standard for trustworthy LLM benchmarking in 2025 with its contamination-free design and objective scoring. Essential for serious evaluation, it outperforms subjective arenas in fairness and longevity.

Challenge: 9.6/10

Relevance: 9.4/10

Value: 9.3/10

Ready for Contamination-Free LLM Benchmarking?

Visit the leaderboard or explore the open-source repo for fair model evaluations.

Free access as of December 2025.