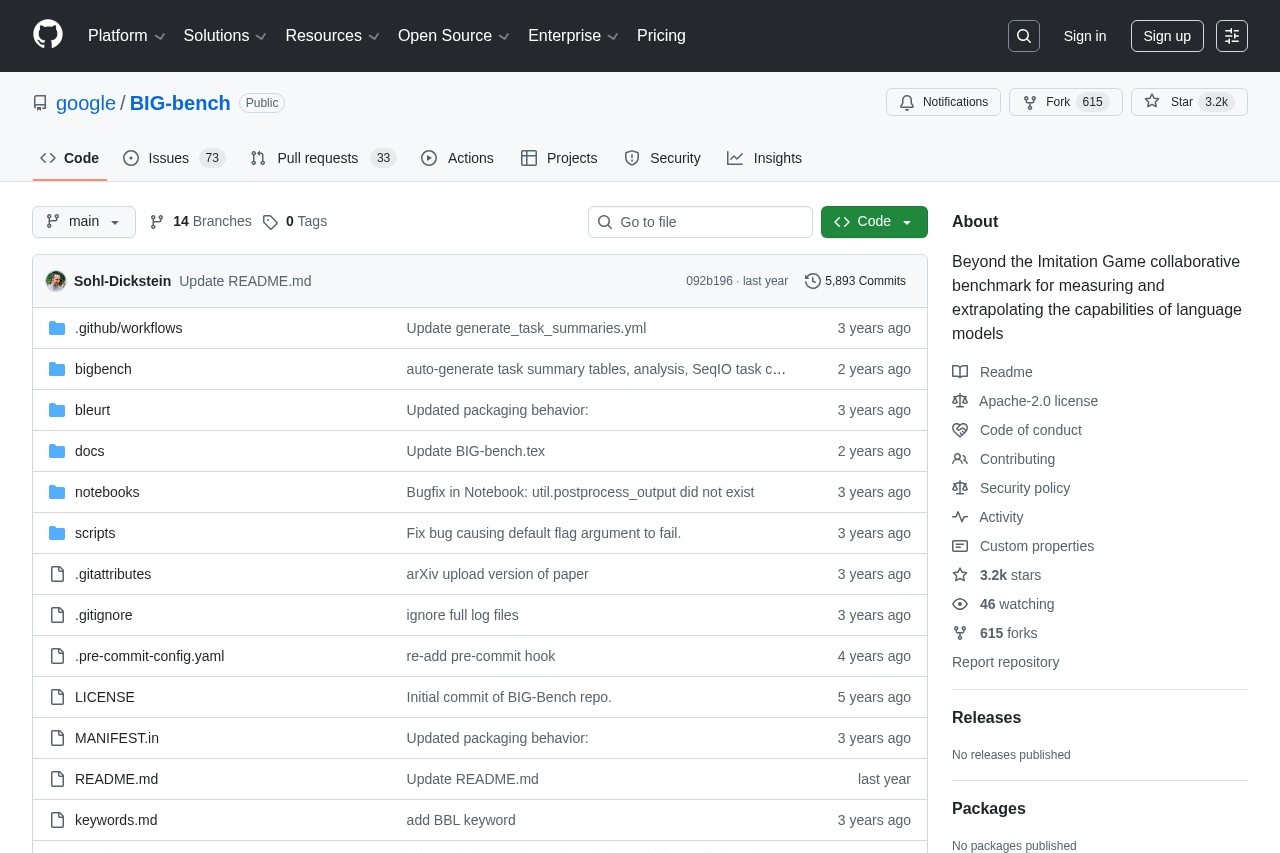

BIG-bench remains a landmark open-source benchmark suite in late 2025, featuring over 200 diverse tasks that probe reasoning, creativity, social understanding, and more. Though frontier models now solve many of its tasks, its breadth makes it well suited to broad capability assessment and historical comparison. It is free to use, community-driven, and easy to run.

LiveBench stands as a leading contamination-limited LLM benchmark in late 2025. It reduces the risk of test-set leakage by regularly refreshing its questions from recent sources, and it scores answers against objective ground truth rather than relying on an LLM judge. It challenges top models like GPT-5.1 and Claude 4.5 across reasoning, math, coding, and more, providing fair, reproducible results trusted for research and development.
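To make "objective ground-truth scoring" concrete, here is a minimal sketch of the general idea: each model answer is compared against a stored reference answer, so no human or LLM judge is involved. This is an illustration only, not LiveBench's actual scoring code; the function names `normalize` and `exact_match_accuracy` and the normalization rules are assumptions made for the example.

```python
def normalize(answer: str) -> str:
    """Lowercase and collapse whitespace so trivial formatting differences are ignored."""
    return " ".join(answer.strip().lower().split())


def exact_match_accuracy(predictions: list[str], references: list[str]) -> float:
    """Return the fraction of predictions that match their reference after normalization.

    Hypothetical helper for illustration; real benchmarks typically use
    task-specific checkers (for example, running unit tests for coding tasks).
    """
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    if not references:
        return 0.0
    correct = sum(normalize(p) == normalize(r) for p, r in zip(predictions, references))
    return correct / len(references)


if __name__ == "__main__":
    model_answers = ["42", " Paris ", "x = 3"]
    ground_truth = ["42", "paris", "x = 4"]
    print(f"accuracy: {exact_match_accuracy(model_answers, ground_truth):.2f}")
```

Because the score depends only on the stored references, two people running the same model outputs through the same checker should get the same number, which is what makes this style of evaluation reproducible.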