Tool Dynamics

Zhipu AI Drops GLM-ASR Open Source and Launches Intelligent Input Method: Real-Time Voice-to-Text That Thinks Like a Human

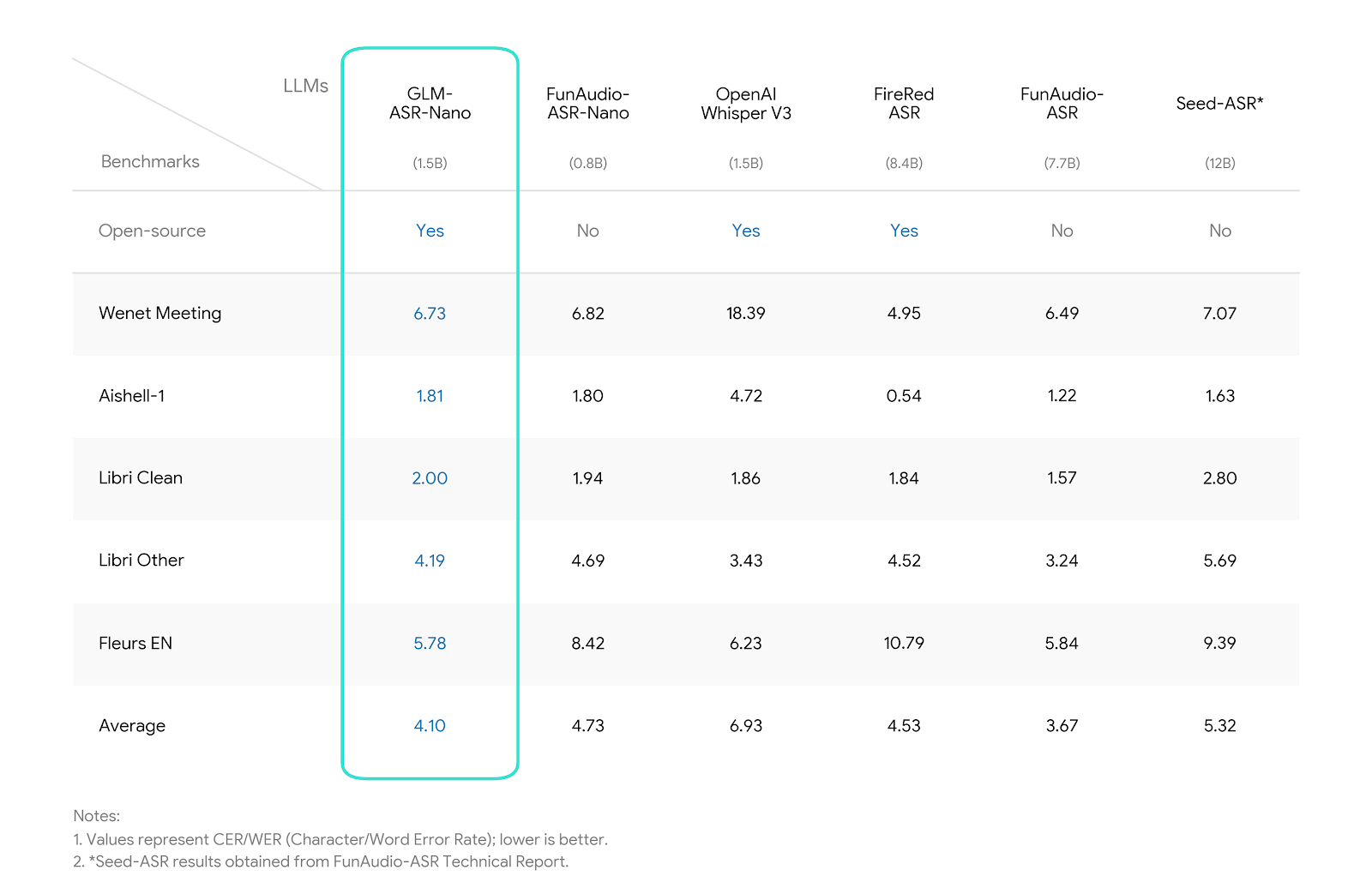

On December 12, 2025, Zhipu AI open-sourced GLM-ASR, a bilingual streaming speech recognition model with 96.8% accuracy on Mandarin and 98.2% on English, and on the same day launched the GLM Intelligent Input Method app. The input method combines live ASR, semantic prediction, and GLM-4 reasoning, so voice dictation can correct errors from context, complete sentences, and even rewrite text in a formal tone. Within hours of release, the app topped China's iOS productivity charts, and GLM-ASR's GitHub repo shot to 15k stars overnight.

Mistral AI Open-Sources Devstral 2: The Next-Gen Agentic Coding Model That Crushes SWE-Bench and Powers Vibe CLI Autonomy

Mistral AI released Devstral 2 on December 9, 2025: an open-weight coding model family made up of the flagship 123B-parameter Devstral 2 and the compact 24B Devstral Small 2. With a 256K context window, a state-of-the-art 72.2% on SWE-Bench Verified, and tool calling for multi-file edits, it outpaces larger rivals at a fraction of the cost. It ships alongside the open-source Mistral Vibe CLI, a terminal agent that scans codebases and executes changes, and together the two bring enterprise-grade agentic coding to everything from local laptops to cloud fleets.

Zhipu AI Open-Sources AutoGLM: The Phone Agent Framework That Turns Every Smartphone into a True AI Phone

On December 9, 2025, Zhipu AI (Z.ai) fully open-sourced AutoGLM, a phone agent model and framework that autonomously operates Android devices from natural language commands. Powered by the AutoGLM-Phone-9B multimodal model, it interprets screenshots, plans multi-step actions, and executes taps, swipes, and text input across 50+ popular Chinese apps such as WeChat, Taobao, Douyin, and Meituan. It can run locally or in the cloud and drives the device via ADB. The release democratizes "AI phone" capabilities, challenges closed ecosystems, and lets developers build privacy-focused agents on any device.
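To make the "taps, swipes, and inputs via ADB" step concrete, here is a minimal sketch of how a planned action list could be turned into standard `adb` commands. It is not AutoGLM's actual API: the `plan` format and every function name below are hypothetical, chosen only for illustration. The `adb shell input` and `adb exec-out screencap -p` commands themselves are standard Android tooling.

```python
from typing import Dict, List

ADB = "adb"  # assumes Android platform-tools is installed and on PATH


def screenshot_cmd() -> List[str]:
    """Command that writes a PNG screenshot of the device screen to stdout."""
    return [ADB, "exec-out", "screencap", "-p"]


def tap_cmd(x: int, y: int) -> List[str]:
    """Command that taps the screen at pixel (x, y)."""
    return [ADB, "shell", "input", "tap", str(x), str(y)]


def swipe_cmd(x1: int, y1: int, x2: int, y2: int, duration_ms: int = 300) -> List[str]:
    """Command that swipes from (x1, y1) to (x2, y2) over duration_ms."""
    return [ADB, "shell", "input", "swipe",
            str(x1), str(y1), str(x2), str(y2), str(duration_ms)]


def text_cmd(text: str) -> List[str]:
    """Command that types text into the focused field."""
    # `input text` reads "%s" as a space; this sketch does not escape
    # other shell metacharacters.
    return [ADB, "shell", "input", "text", text.replace(" ", "%s")]


def plan_to_commands(plan: List[Dict]) -> List[List[str]]:
    """Translate a hypothetical action plan into adb argument lists."""
    builders = {"tap": tap_cmd, "swipe": swipe_cmd, "text": text_cmd}
    commands = []
    for step in plan:
        args = {k: v for k, v in step.items() if k != "action"}
        commands.append(builders[step["action"]](**args))
    return commands


# Hypothetical plan an agent might produce after reading a screenshot.
plan = [
    {"action": "tap", "x": 540, "y": 1200},
    {"action": "text", "text": "hot pot nearby"},
    {"action": "swipe", "x1": 540, "y1": 1600, "x2": 540, "y2": 400},
]

for cmd in plan_to_commands(plan):
    print(" ".join(cmd))
```

The sketch only builds the commands. To run them against a connected device, each list could be passed to `subprocess.run`, with `screenshot_cmd()` supplying the next screenshot for the model to interpret.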

Ant Group's Lingguang AI Assistant Launches Web Version: 30-Second Natural Language App Generation Now Seamless Across Devices

Ant Group officially rolled out the web version of its multimodal general AI assistant Lingguang on December 9, 2025, completing its multi-device ecosystem and bringing PC users the full power of "Lingguang Dialogue" and "Lingguang Flash Apps." The browser-based experience retains the signature "30-second natural language mini-app generation" capability, syncs data and creations with mobile, and focuses on workplace and education productivity. Limited-rollout (grayscale) tests show heavy usage in professional scenarios, with over 3.3 million flash apps created in the first two weeks after the mobile launch, signaling Lingguang's explosive shift from chat tool to true productivity powerhouse.

Zhipu AI Launches and Open-Sources GLM-4.6V Series: Native Multimodal Tool Calling Turns Vision into Action — The True Agentic VLM Revolution

On December 8, 2025, Zhipu AI officially released and fully open-sourced the GLM-4.6V series of multimodal models, including the high-performance GLM-4.6V (106B total params, 12B active) and the lightweight GLM-4.6V-Flash (9B). The series features native multimodal function calling, where images serve directly as parameters and results as context, plus a 128K token window for handling 150-page docs or hour-long videos, and it achieves SOTA on 30+ benchmarks at comparable scales. API prices have been cut by 50%, the Flash version is free for commercial use, and weights and code are now on GitHub and Hugging Face, igniting a frenzy for visual agents in coding, shopping, and content creation.

Tencent Unleashes Hunyuan 2.0: 406B MoE Powerhouse with Industry-Leading Reasoning Efficiency and 256K Context Mastery

Tencent officially launched Hunyuan 2.0 (Tencent HY 2.0) on December 5, 2025, in two variants: HY 2.0 Think for deep reasoning and HY 2.0 Instruct for rapid responses. Built on a massive 406B-parameter MoE architecture (32B active), it supports a 256K context window while delivering top-tier inference speed and efficiency. It is already live in the Yuanbao and ima apps, with Tencent Cloud APIs open, and early benchmarks show massive gains in math, science, coding, and long-context tasks, positioning it as a domestic frontrunner against global giants.
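For readers comparing the MoE figures quoted in this roundup, the short sketch below works out what share of each model's parameters is active per token. It uses only the total and active counts stated in the items above; the function and dictionary names are illustrative, and the ratio is plain arithmetic, not a vendor-published metric.

```python
def active_fraction(total_b: float, active_b: float) -> float:
    """Share of parameters active per token, given counts in billions."""
    return active_b / total_b


# (total params, active params) in billions, as quoted in the items above.
models = {
    "GLM-4.6V": (106, 12),
    "Tencent HY 2.0": (406, 32),
}

for name, (total, active) in models.items():
    share = active_fraction(total, active)
    print(f"{name}: {active}B of {total}B active ({share:.1%})")
```

By this arithmetic, roughly 11% of GLM-4.6V's parameters and roughly 8% of HY 2.0's are active for any given token, which is why per-token compute tracks the active count more closely than the headline total.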

Google Opens Public Beta for Gemini 3 Deep Think: The "Olympiad Gold Medal-Level" Reasoning Mode That Turns AI into a True Thinking Partner

On December 4, 2025, Google officially rolled out Gemini 3 Deep Think mode in public beta to Google AI Ultra subscribers. Building on the Gemini 3 Pro launch last month, this enhanced reasoning engine uses parallel hypothesis exploration to achieve unprecedented performance on PhD-level science, math olympiad-caliber logic, and long-horizon planning. Inheriting the gold-medal prowess of its Gemini 2.5 predecessors at IMO and ICPC, Deep Think scores 41% on Humanity’s Last Exam (without tools) and 45.1% on ARC-AGI-2, marking the first time an AI has publicly demonstrated "deep deliberation" at scale. Early testers report 4x deeper insights on complex queries, cementing Gemini's frontier lead.

Pollo AI Secures $14 Million Seed Funding: The "AI CapCut" Aggregator Explodes with 6M MAU and Profitability in Record Time

Singapore-based Pollo AI announced a $14 million seed round on December 8, 2025, led by Gaorong Capital with participation from ZhenFund, marking its first institutional funding. The all-in-one AI video and image generation platform integrates top models like Kling AI, Runway, Veo, and Stable Diffusion, and boasts 20M+ registered users, 6M+ MAU, and $20M+ in annualized revenue; it has been profitable since May. The funds will fuel model aggregation, product upgrades, and global expansion, positioning Pollo as the "video version of Canva" in a crowded creator economy.

LiblibAI Launches Seedream 4.5: ByteDance's Latest AI Image Beast Goes Live — Crushing Consistency and Multi-Reference Limits

LiblibAI, China's powerhouse AI creation platform, rolled out full access to ByteDance's Seedream 4.5 on December 5, 2025. The upgrade boasts strong subject consistency, fusion of up to 14 reference images, razor-sharp small-text rendering, and cinematic 4K fidelity. Creators can now lock characters across poses and scenes, blend multi-source references without morphing artifacts, and nail complex posters with flawless typography, all in seconds. Early users report 5x faster professional workflows, positioning Seedream 4.5 as the new king for ads, e-commerce, and storyboarding, outpacing rivals in native editing cohesion.

Xiaohongshu Fully Acquires "Diandian": Integrating AI Life Search Engine to Accelerate Content-to-Commerce Closed Loop

On December 4, 2025, enterprise registry data revealed that Xiaohongshu's core operating entity, Xingyin Information Technology (Shanghai) Co., Ltd., had completed a 100% acquisition of Shanghai Shengdong Shizhang Technology Co., Ltd., the developer behind the strategic AI search product "Diandian". Original shareholder and legal representative Wei Kuang (a longtime Xiaohongshu senior product manager) fully exited. The move formally integrates Diandian, the AI life search assistant Xiaohongshu incubated, into the group, bolstering its AI-driven push in lifestyle scenarios and signaling an aggressive play to capture the "content + search + transaction" entry point amid fierce competition from WeChat and Douyin.

Doubao Unveils Seedream 4.5: The Commercial-Grade Image Creation Model Built for Production Power — Crushing Midjourney in Speed and Realism

ByteDance's Doubao AI platform launched Seedream 4.5 on December 12, 2025 — a hyper-optimized image generation model laser-focused on commercial workflows like advertising, e-commerce visuals, and brand asset pipelines. With enterprise-grade consistency, ultra-fast 3-second generations, built-in style libraries for 50+ industries, and seamless integration with Doubao's Canvas and Jinshu document tools, it delivers production-ready assets at scale. Early enterprise adopters report 8x faster visual iteration and 60% cost reduction vs. Midjourney or Firefly, instantly making Seedream the default choice for China's booming creator economy.

Anthropic Acquires Bun: The JavaScript Powerhouse Fueling Claude Code's $1B Explosion and the Rise of AI-Native Dev Stacks

Anthropic announced its first-ever acquisition on December 2, 2025: Bun, the lightning-fast JavaScript runtime, bundler, and toolkit that's already the backbone of Claude Code. With Bun's 7M+ monthly downloads and 82K GitHub stars, this move supercharges Anthropic's AI coding empire — Claude Code hit $1B ARR just six months post-launch — by embedding high-performance execution directly into agentic workflows. Bun stays open-source and MIT-licensed, but expect turbocharged integrations for Claude Agent SDK and beyond, signaling AI's vertical march into the full SDLC.