Tool Dynamics

Explore Tool Dynamics for insights on tool performance, predictive maintenance strategies, and lifecycle management to maximize efficiency and reduce costs.

- Home

- Tool Dynamics

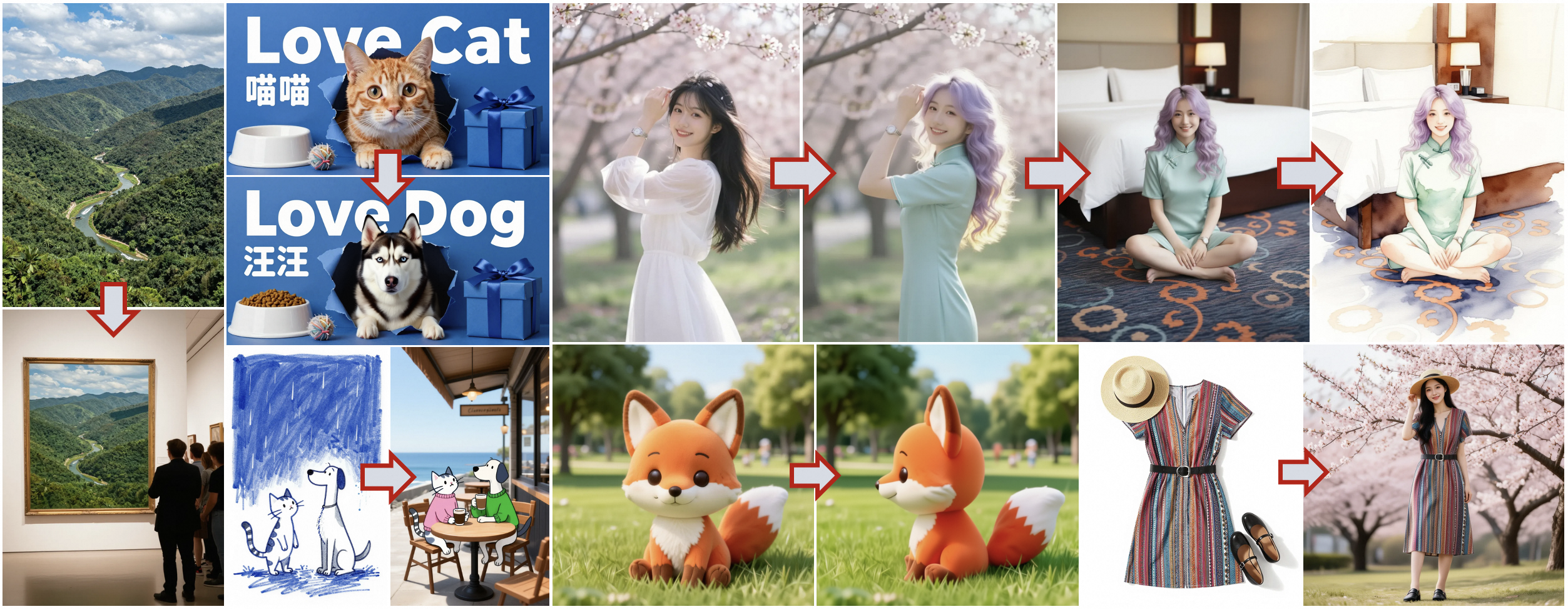

Doubao Unveils Seedream 4.5: The Commercial-Grade Image Creation Model Built for Production Power — Crushing Midjourney in Speed and Realism

ByteDance's Doubao AI platform launched Seedream 4.5 on December 12, 2025 — a hyper-optimized image generation model laser-focused on commercial workflows like advertising, e-commerce visuals, and brand asset pipelines. With enterprise-grade consistency, ultra-fast 3-second generations, built-in style libraries for 50+ industries, and seamless integration with Doubao's Canvas and Jinshu document tools, it delivers production-ready assets at scale. Early enterprise adopters report 8x faster visual iteration and 60% cost reduction vs. Midjourney or Firefly, instantly making Seedream the default choice for China's booming creator economy.

Anthropic Acquires Bun: The JavaScript Powerhouse Fueling Claude Code's $1B Explosion and the Rise of AI-Native Dev Stacks

Anthropic announced its first-ever acquisition on December 2, 2025: Bun, the lightning-fast JavaScript runtime, bundler, and toolkit that's already the backbone of Claude Code. With Bun's 7M+ monthly downloads and 82K GitHub stars, this move supercharges Anthropic's AI coding empire — Claude Code hit $1B ARR just six months post-launch — by embedding high-performance execution directly into agentic workflows. Bun stays open-source and MIT-licensed, but expect turbocharged integrations for Claude Agent SDK and beyond, signaling AI's vertical march into the full SDLC.

LiblibAI Launches Kling O1: The All-in-One Video Model Turning Prompts into Pixar-Grade Worlds — Kicking Off the Multimodal Video Revolution

LiblibAI rolled out Kling O1 on November 30, 2025 — Kwai's (Kuaishou) groundbreaking unified multimodal video model that fuses text, images, and clips into seamless generations via a single input box. Powered by MVL architecture and Chain-of-Thought reasoning, it nails physics, consistency, and creativity, outperforming Veo 3.1 and Runway Alpha on benchmarks like 247% win rate in image-reference tasks. Now live on LiblibAI with a chat-style UI, early creators are churning 10s cinematic shorts in seconds, signaling the death of tool-hopping workflows.

Runway Unveils Gen-4.5 "David": The Underdog AI Video Model Crushing Google Veo 3 and OpenAI Sora 2 with Cinematic Realism

Runway launched Gen-4.5 on December 1, 2025 — its frontier video generation model codenamed "David" (a biblical nod to slaying giants), topping the Video Arena leaderboard with 1,247 Elo points for unmatched motion quality, prompt adherence, and visual fidelity. Built on NVIDIA Hopper/Blackwell GPUs, it crafts high-definition, physics-accurate clips from text prompts, handling complex scenes with object permanence and causal reasoning that outpace rivals. Rolling out now to all users, early creators report 5x faster Hollywood-grade shorts, fueling Runway's $3.55B valuation surge.

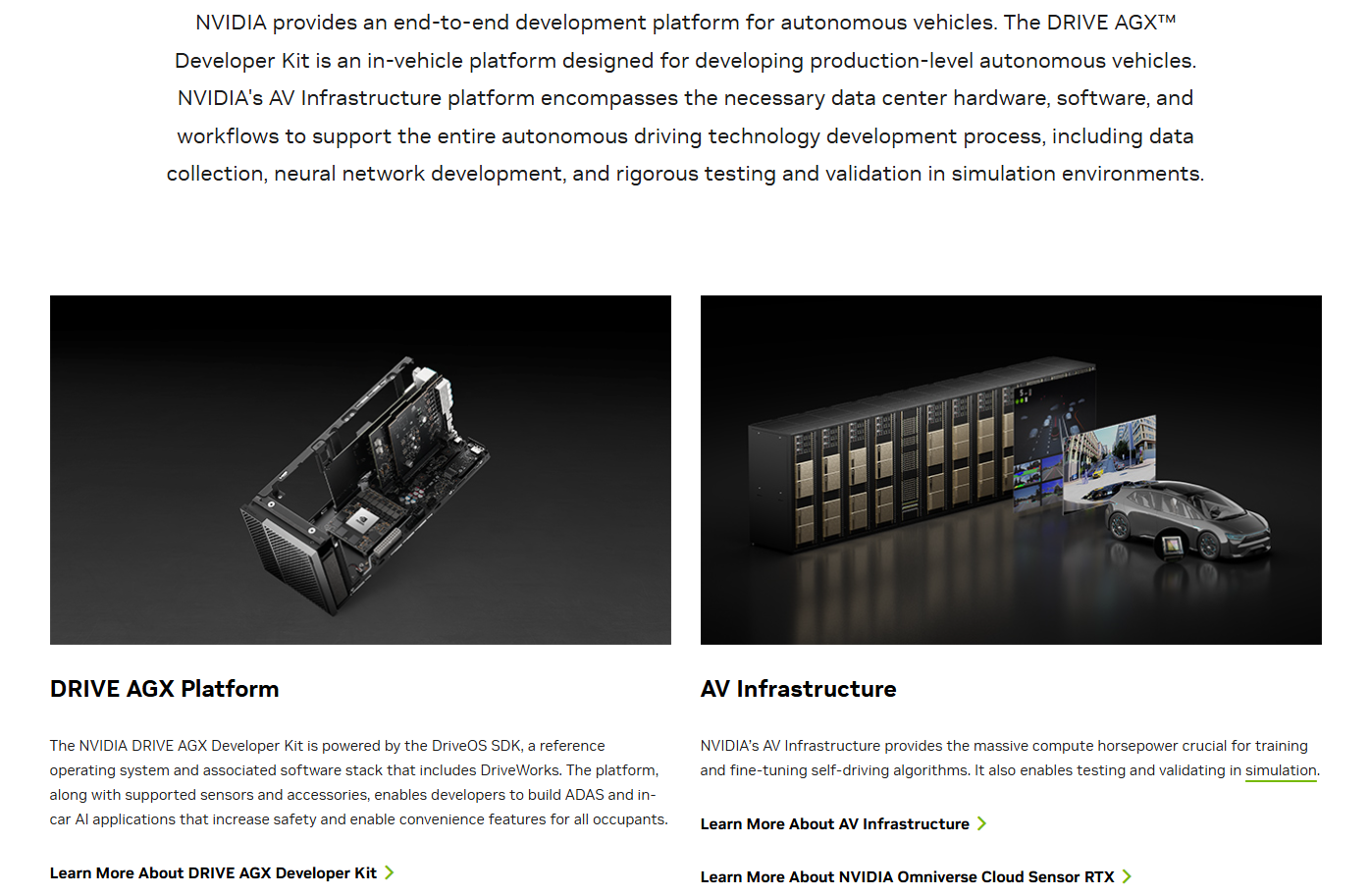

NVIDIA Unleashes Alpamayo-R1: The Reasoning VLA That Gives Autonomous Driving a Brain — Open-Sourced for Level 4 Domination

NVIDIA dropped Alpamayo-R1 on December 3, 2025 — the world's first industry-scale open-source reasoning Vision-Language-Action (VLA) model for AVs, fusing chain-of-thought reasoning with trajectory planning to conquer long-tail edge cases like erratic pedestrians or foggy merges. Trained on a massive 1,727-hour multi-country dataset, it outputs explainable "thoughts" in plain English before steering, slashing decision latency by 40% in sims. Now live on GitHub and Hugging Face with the AlpaSim eval framework, it's a direct shot at Tesla's black-box FSD and Waymo's data moats — early benchmarks show 25% better safety scores in rare scenarios.

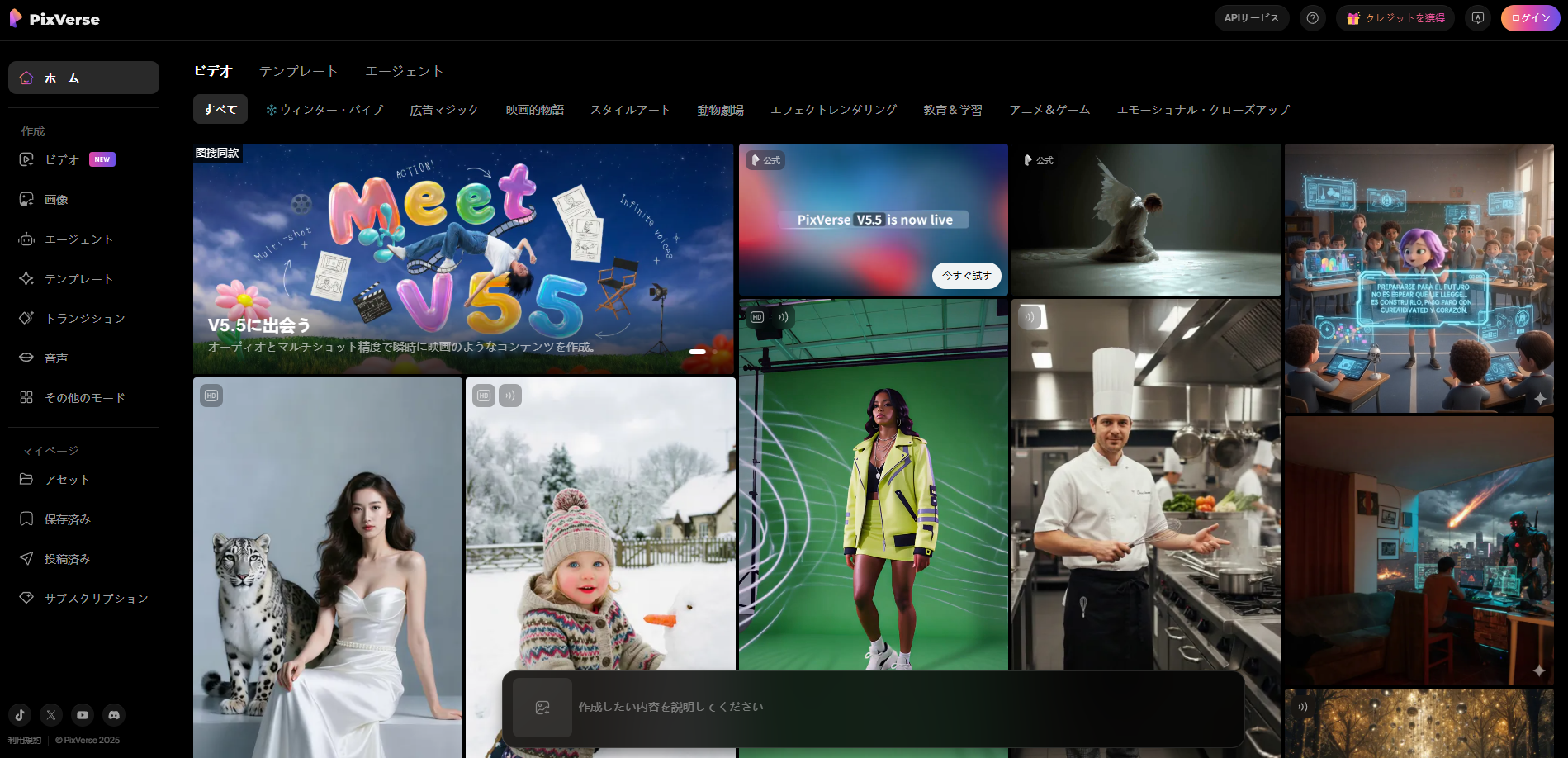

PixVerse V5.5 Drops: Director-Level Audio-Visual Sync in One Click — Turning Prompts into Cinematic Short Films Overnight

AiShi Technology unleashed PixVerse V5.5 on December 4, 2025 — China's first AI video powerhouse with multi-camera narrative generation and seamless audio-visual synchronization. From a single text prompt or image, it auto-scripts multi-shot sequences with lip-synced dialogue, ambient SFX, and BGM, outputting polished 1080p clips in 5-10 seconds. No more stitching clips or manual syncing: this "director-mode" beast has already hooked 100M+ users, with ARR blasting past $40M. Early tests show it outpacing Runway Gen-3 and Kling in narrative flow, making pro-level shorts accessible to anyone with a keyboard.

DeepSeek V3.2 Official Release: Reasoning-First Powerhouse with Integrated Tool-Thinking for Unmatched Agentic AI

DeepSeek AI launched the official DeepSeek-V3.2 on December 1, 2025 — a frontier large language model series optimized for superior reasoning and agent performance, now available via API, web, app, and Hugging Face. Introducing DeepSeek Sparse Attention for long-context efficiency, a scalable RL framework rivaling GPT-5, and a groundbreaking "Thinking in Tool-Use" mode that fuses step-by-step deliberation with external tool calls, V3.2 and its high-compute V3.2-Speciale variant achieve gold-medal IMO/IOI scores while slashing inference costs. This release marks DeepSeek's boldest agentic leap, empowering developers with seamless hybrid workflows.

ByteDance's Vidi2 Surpasses Gemini 3 Pro: The 12B Video LLM Revolutionizing Understanding and Editing with TikTok-Scale Smarts

ByteDance unveiled Vidi2 on December 1, 2025 — a 12-billion-parameter multimodal large language model specialized for video understanding and creation, now open-sourced on GitHub. Crushing benchmarks like VUE-TR-V2 (temporal retrieval) and VUE-STG (spatio-temporal grounding) with scores of 53.19% Temporal IoU — double that of Gemini 3 Pro's 27.50% — Vidi2 processes hours-long footage for precise edits, story-aware cuts, and highlight extractions. Leveraging TikTok's 1B+ daily users for training, it outperforms GPT-5 on long-video QA while running on consumer hardware, marking ByteDance's boldest strike in the video AI arena.

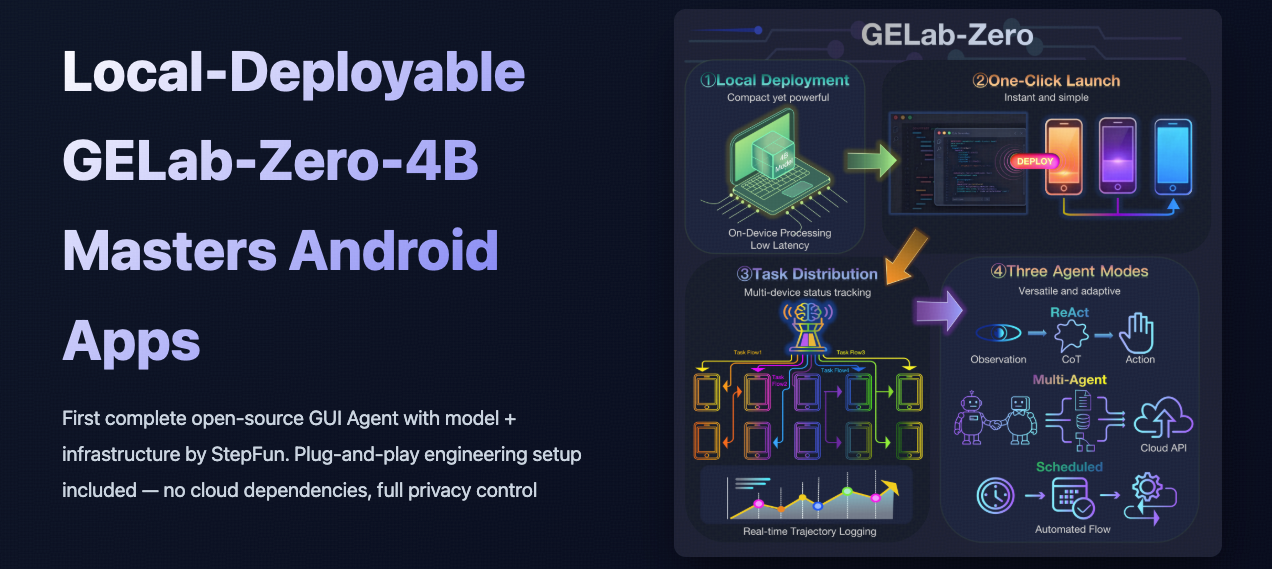

StepFun Open-Sources GELab-Zero 4B: The Lightweight GUI Agent That Conquers All Android Devices with Zero-Shot Automation Magic

StepFun AI launched GELab-Zero on November 28, 2025 — a fully open-source 4B-parameter multimodal GUI agent model designed for autonomous Android control, now available on Hugging Face under Apache 2.0. Featuring plug-and-play infrastructure for ADB handling, task recording, and local inference on consumer hardware (as low as 16GB VRAM), it achieves 75.86% success on AndroidWorld benchmarks for complex tasks like ride-hailing and shopping. This zero-dependency beast runs offline on any Android device, slashing latency while preserving privacy — a direct challenge to cloud-heavy agents like Claude's Computer Use, empowering devs to automate mobile workflows without vendor lock-in.

DeepSeek's Powerhouse Return: Open-Sourcing DeepSeek-Math-V2, the IMO Gold-Medal Math Model Crushing Theorems with Self-Verification

DeepSeek AI roared back on November 27, 2025, with DeepSeek-Math-V2 — a groundbreaking open-source math reasoning model built on DeepSeek-V3.2-Exp-Base, achieving gold-level scores on IMO 2025 and CMO 2024, plus a near-perfect 118/120 on Putnam 2024 via scaled test-time compute. Featuring a dual verifier-generator architecture for self-verifiable proofs, it outpaces Claude 4 and Gemini on IMO-ProofBench, emphasizing rigorous step-by-step logic over mere answers. Weights dropped on Hugging Face under Apache 2.0, democratizing Olympiad-grade AI for researchers and educators worldwide.

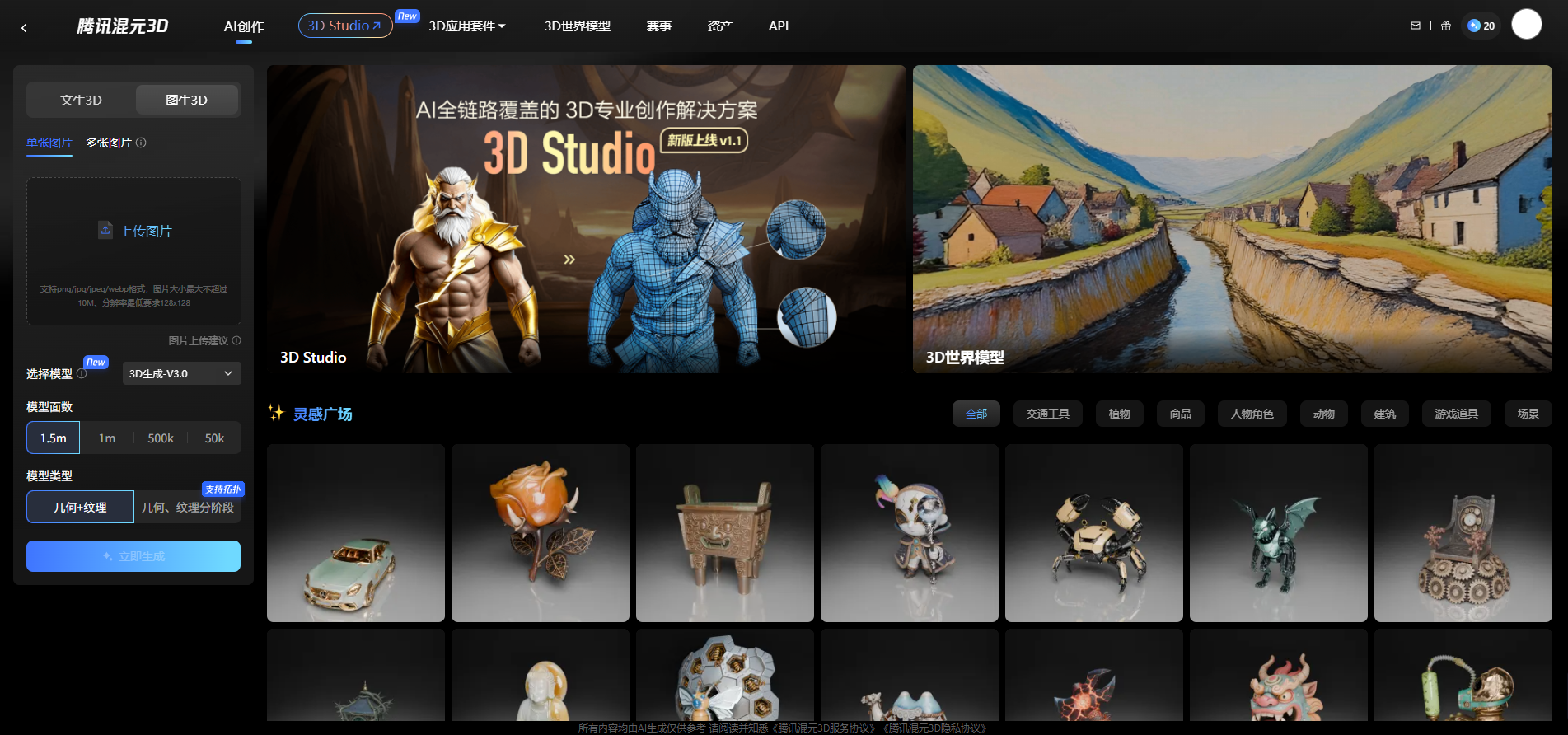

Tencent's Hunyuan 3D Studio Upgrades to 1.1: PolyGen 1.5 Unlocks Artist-Grade 3D Assets Straight from Prompts — No Modeling Marathons Required

Tencent unveiled Hunyuan 3D Studio 1.1 on November 28, 2025 — a seismic upgrade integrating the cutting-edge PolyGen 1.5 model for end-to-end quad-mesh generation, delivering production-ready 3D assets in minutes. Pioneering native quad topology learning with seamless edge loops and hybrid surfacing, it slashes wiring complexity by 70% for soft/hard models, outpacing traditional tools like Blender in efficiency and fidelity. Free for core use via the web platform, with pro API access on Tencent Cloud, this launch catapults creators from concepts to game-ready props, signaling Tencent's dominance in AI-driven 3D pipelines.

Alibaba Open-Sources Z-Image: The 6B-Parameter Efficiency Beast That Matches 20B+ Closed-Source Titans in Photorealism and Speed

Alibaba's Tongyi Lab dropped Z-Image on November 26, 2025 — a revolutionary 6B-parameter open-source text-to-image model that's now live on Hugging Face under Apache 2.0. Featuring Single-Stream Diffusion Transformer (S3-DiT) architecture, it generates stunning 1024x1024 images in just 8 steps with sub-second latency on consumer GPUs (16GB VRAM), supporting bilingual text rendering and complex prompts. Outpacing Midjourney V7 and DALL-E 3 in human preference Elo scores (1,038 on AI Arena), Z-Image-Turbo variant slashes costs by 3x while delivering SOTA visual quality — a lightweight lightning bolt for devs, creators, and edge AI.