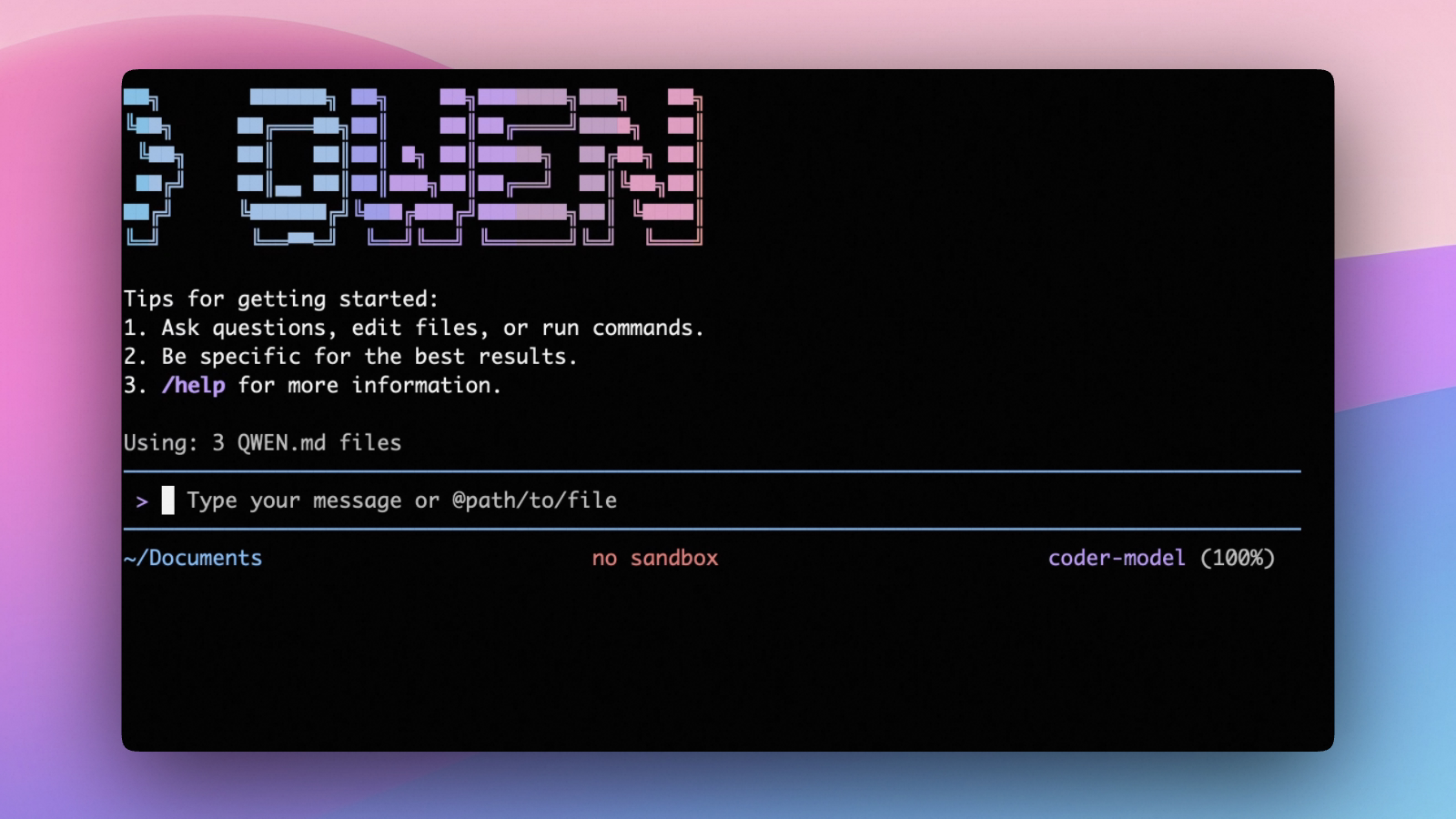

Alibaba Open-Sources Qwen3‑Coder‑Next — “Small but Mighty” Coding‑Agent MoE With Only 3B Active Params and 256K Context

Alibaba’s Qwen team has released Qwen3-Coder-Next, an open-weight coding model aimed at agentic coding and local development. Although it has 80B total parameters, it is a sparse mixture-of-experts (MoE) model that activates only about 3B parameters per token. The team claims performance comparable to models with 10–20× more active parameters, so its per-token compute cost is far lower than its total size suggests. The model supports a native context length of 262,144 tokens (≈256K), is designed for tool use and long-horizon coding loops, and is explicitly positioned to integrate with popular CLI and IDE scaffolds such as Claude Code, Qwen Code, and Cline.
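The gap between total and active parameters comes from top-k expert routing: a small router scores every expert for each token, and only the highest-scoring few actually run. The sketch below is a toy illustration under hypothetical sizes (8 experts, top-2 routing, hidden size 4). It is not Qwen3-Coder-Next's actual architecture, expert count, or router, and each "expert" is a single linear map standing in for a full feed-forward block.

```python
import math
import random

# Hypothetical toy configuration, NOT Qwen3-Coder-Next's real layout.
NUM_EXPERTS = 8   # experts available in the layer
TOP_K = 2         # experts actually run per token
D_MODEL = 4       # hidden size of each token vector
CONTEXT_TOKENS = 262_144  # from the article: 256 * 1024, hence "256K"


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def matvec(w, x):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(wi * xi for wi, xi in zip(row, x)) for row in w]


def random_matrix(rows, cols, rng):
    return [[rng.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]


def moe_forward(x, router, experts, k=TOP_K):
    """Route one token through its top-k experts; return (output, chosen ids)."""
    logits = matvec(router, x)  # one routing score per expert
    chosen = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    gates = softmax([logits[i] for i in chosen])  # renormalize over chosen experts only
    out = [0.0] * len(x)
    for gate, i in zip(gates, chosen):
        y = matvec(experts[i], x)  # only these k experts do any work
        out = [o + gate * yi for o, yi in zip(out, y)]
    return out, chosen


rng = random.Random(0)
experts = [random_matrix(D_MODEL, D_MODEL, rng) for _ in range(NUM_EXPERTS)]
router = random_matrix(NUM_EXPERTS, D_MODEL, rng)

token = [0.5, -0.2, 0.1, 0.9]
out, chosen = moe_forward(token, router, experts)

# Expert weights stored vs. expert weights touched for this token.
total_expert_params = NUM_EXPERTS * D_MODEL * D_MODEL
active_expert_params = TOP_K * D_MODEL * D_MODEL
print("experts used:", chosen)
print("active fraction:", active_expert_params / total_expert_params)  # 2 of 8 -> 0.25

# The article's headline figures, same ratio logic: ~3B active of 80B total.
print("Qwen3-Coder-Next active fraction ~", 3 / 80)
```

With 2 of 8 experts active, each token touches only a quarter of the expert weights, yet all 8 must still be held in memory. Applied loosely to the headline figures, about 3B of 80B parameters (roughly 3.75%) do work per token. Real models also share attention and embedding weights across all tokens, so this ratio is only an approximation.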

Mistral Large 3 Enters Public Beta — French AI Giant Challenges GPT-4o and Claude 3.5 with New Agentic Capabilities and Unmatched Efficiency

Mistral AI has launched the public beta of Mistral Large 3, its next-generation flagship model, directly challenging the dominance of OpenAI's GPT-4o and Anthropic's Claude 3.5. Featuring a new "Tool Use v2" engine for advanced agentic tasks, a 256K-token context window, and top-tier benchmark performance, Mistral Large 3 is positioned as the most powerful and cost-efficient European alternative for enterprise-grade AI applications. It is available now via La Plateforme and Microsoft Azure.