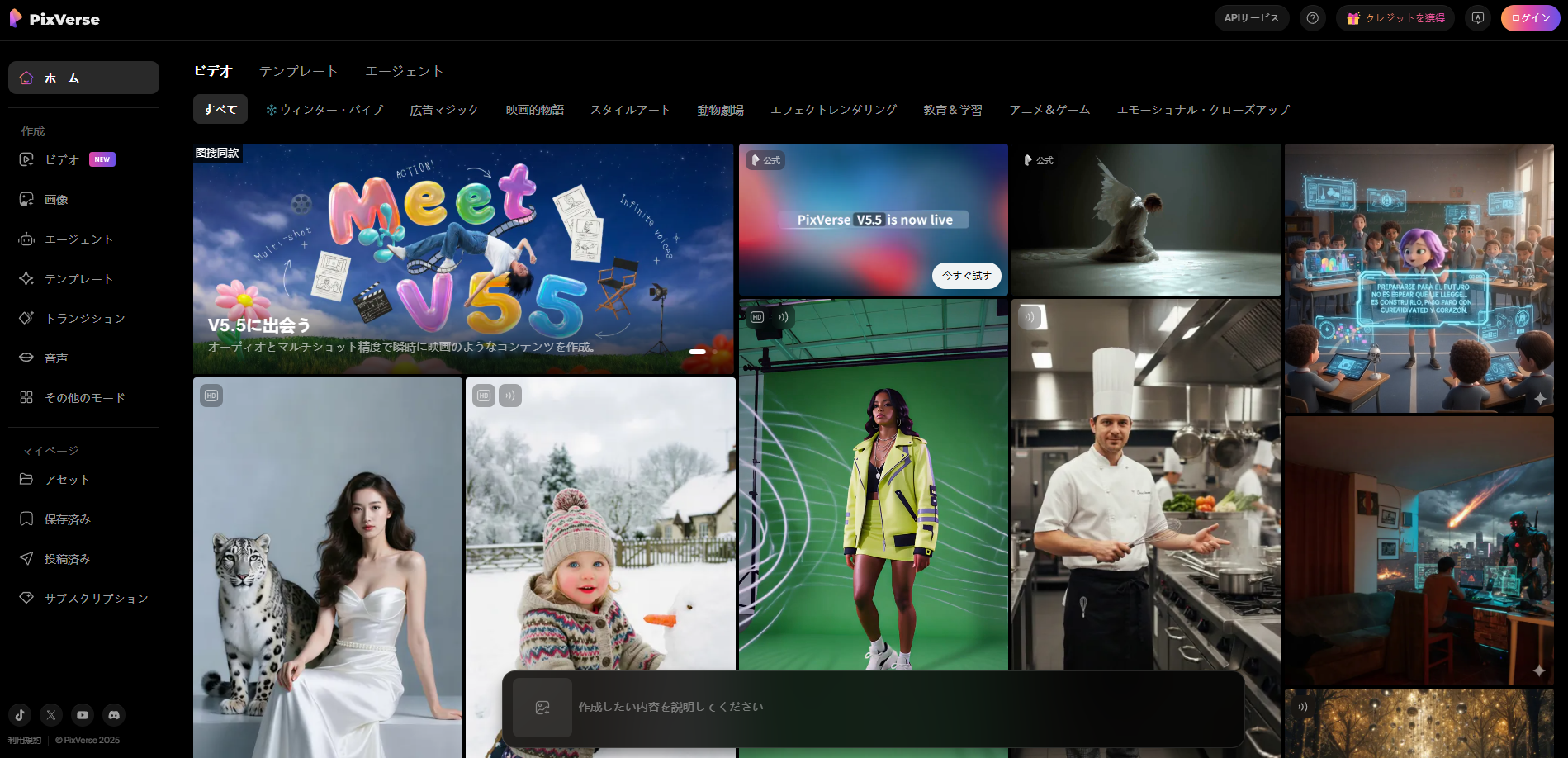

PixVerse V5.5 Drops: Director-Level Audio-Visual Sync in One Click — Turning Prompts into Cinematic Short Films Overnight

Category: Tool Dynamics

Excerpt:

AiShi Technology unleashed PixVerse V5.5 on December 4, 2025, billing it as China's first AI video model with multi-camera narrative generation and seamless audio-visual synchronization. From a single text prompt or image, it auto-scripts multi-shot sequences with lip-synced dialogue, ambient SFX, and BGM, outputting polished 1080p clips that run 5-10 seconds. No more stitching clips or manual syncing: the PixVerse platform behind this "director-mode" beast has already hooked 100M+ users, with ARR blasting past $40M. Early tests reportedly show it outpacing Runway Gen-3 and Kling in narrative flow, making pro-level shorts accessible to anyone with a keyboard.

🎬 PixVerse V5.5: Chinese AI Video Maestro Buries Clunky Clips Under Cinematic Gold

The era of clunky AI clips — awkward pans, mismatched mouths, and silent stares — just got buried under a landslide of cinematic gold.

PixVerse V5.5, the latest salvo from Chinese AI video trailblazer AiShi Technology, isn't tweaking knobs; it's rewriting the script for short-form storytelling. Fresh off a $14M B+ funding round, and with ARR now past $40M, this upgrade leaps from single-scene gimmicks to full-blown director's cuts: multi-shot montages with physically plausible motion, auto-camera choreography, and audio that hugs every frame like a pro sound designer. Built on a hybrid Diffusion-Transformer core with an upgraded MVL architecture, V5.5 processes prompts in seconds, spitting out 5-10s HD narratives that feel ripped from a festival reel, all while keeping compute costs 30% leaner than global rivals.

🎛️ The One-Click Director's Chair

Ditch the editing bay: V5.5's magic boils down to a prompt like "a detective interrogates a suspect in a rainy noir office" → boom, an 8s clip with:

Intelligent Multi-Shot Flow

Auto-switches from wide establishing shots to tense close-ups, push-ins, and over-the-shoulders, with seamless transitions that nail rhythm and pacing. No more static stares — it thinks like a DP, layering depth and energy.

Seamless Audio Alchemy

Generates dialogue with tight lip-sync (95% accuracy on mouth shapes), plus ambient rain patters, tense BGM swells, and SFX that sync frame-for-frame with on-screen actions. Upload your own voice or let it handle TTS in 119 languages.

Narrative Brain

LLM-powered script breakdown in 5s — parses intent, builds scene graphs, and ensures continuity across shots, turning vague ideas into coherent micro-stories.

Input Freedom

Text, image refs, or multimodal mashups; extend clips to 20s+ with continuity holds, all exportable to Unity or social feeds.
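For a rough mental model of the multi-shot flow described above, here is a minimal Python sketch of a shot list for the 8s noir example. It is illustrative only: `Shot`, `build_shot_list`, and the even time split are assumptions made for this example, not PixVerse's actual data model or API.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Shot:
    """One shot in a hypothetical multi-shot plan (not PixVerse's schema)."""
    kind: str                      # e.g. "wide", "close-up"
    duration_s: float              # length of this shot in seconds
    audio: List[str] = field(default_factory=list)  # cues tied to this shot


def build_shot_list(
    kinds: List[str],
    total_s: float,
    audio_by_kind: Optional[Dict[str, List[str]]] = None,
) -> List[Shot]:
    """Split total_s evenly across the requested shot kinds (an assumption)."""
    if not kinds:
        raise ValueError("need at least one shot kind")
    audio_by_kind = audio_by_kind or {}
    per_shot = total_s / len(kinds)
    return [Shot(k, per_shot, list(audio_by_kind.get(k, []))) for k in kinds]


def total_duration(shots: List[Shot]) -> float:
    """Sum the shot lengths so the plan can be checked against the clip length."""
    return sum(s.duration_s for s in shots)


# The detective prompt, planned as four shots over an 8-second clip.
shots = build_shot_list(
    ["wide", "close-up", "push-in", "over-the-shoulder"],
    8.0,
    {"wide": ["rain ambience"], "close-up": ["dialogue"]},
)
for shot in shots:
    print(shot.kind, shot.duration_s, shot.audio)
```

Keeping audio cues attached to each shot, rather than in a separate track, is one simple way to picture how sound could stay aligned when shots are reordered or extended.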

🎨 Interface That's a Creator's Fever Dream

Fire up the SeaArt or Dzine dashboard (integrated playgrounds), and it's prompt → canvas → magic: a live preview blooms with wireframe shots solidifying into explorable timelines.

Invoke @PixVerse mid-gen to remix:

@add chase sequence with thunder SFX

@lip-sync to my audio upload
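To show how remix directives like the ones above could be pulled apart into separate instructions, here is a small Python sketch. The `@verb argument` grammar and the `parse_directives` helper are assumptions for illustration; the source does not document PixVerse's actual command syntax.

```python
from typing import List, Tuple


def parse_directives(text: str) -> List[Tuple[str, str]]:
    """Split a string of '@verb argument' directives into (verb, argument) pairs.

    Hypothetical grammar: each directive starts with '@', the first word is
    the verb, and everything up to the next '@' is its argument.
    """
    directives = []
    for chunk in text.split("@"):
        chunk = chunk.strip()
        if not chunk:
            continue  # skip the empty piece before the first '@'
        verb, _, argument = chunk.partition(" ")
        directives.append((verb, argument.strip()))
    return directives


text = "@add chase sequence with thunder SFX @lip-sync to my audio upload"
for verb, argument in parse_directives(text):
    print(f"{verb}: {argument}")
```

Splitting on `@` means the same helper handles directives written on one line or on several, though it would misread an argument that itself contains an `@` character.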

Outputs land as glTF-ready assets or watermarked shorts, with semantic versioning to rollback "that offbeat cut." Pro tip: SVIP unlocks unlimited gens and private VPC for enterprise — no more queue purgatory.

📈 Launch Metrics: A Creative Tsunami

User Explosion

100M+ global creators onboard since 2024, with V5.5 spiking daily actives 3x in week one — devs churning TikTok virals 5x faster, marketers ditching stock footage.

Benchmark Beatdown

| Benchmark | Result |
|---|---|
| SpatialBench Motion Realism | 13.5/15 (top rank) |
| LiveCodeBench Video Coherence | 89% |
| Lip-Sync Fidelity vs. Kling 1.5 | Edges ahead |
| Latency | Sub-10s |

Real-World Rampage

Indie filmmakers gen "enchanted forest quest" intros in minutes; educators drop synced explainer vids; brands auto-craft ad reels with custom voiceovers. Internal betas slashed production from 2 hours to 7 minutes per short, roughly a 17x speedup.

⚠️ The Fine Print: Not Quite Hollywood Yet

Beta guardrails are tight:

- Base clips cap at 10s (extended clips get fuzzy past 15s)

- Complex plots risk minor glitches in long-range logic

- Safety guardrails watermark generated clips and audit voice tones for bias

AiShi's red-teaming doubled down on MVL for cultural nuance, but pros might still layer in DaVinci for final polish. Open-source teases for the audio engine could spark a dev frenzy.

🌊 Industry Shockwaves

This isn't just a tool drop — it's a gut punch to the $10B video gen market. While Runway Gen-4 dreams of Hollywood and Sora teases eternity, V5.5 democratizes "complete narrative units" for the masses, flooding platforms with AI-forged shorts that blur real vs. rendered. Roblox creators? Metaverse builders? Social empires? They're all about to drown in user-gen epics, with AiShi eyeing global export via API hooks.

PixVerse V5.5 isn't evolving AI video — it's democratizing the director's guild, handing one-click symphonies of sight and sound to bedroom auteurs and boardroom hustlers alike. As multi-shot mastery and sync sorcery go mainstream, the barrier between brainwave and blockbuster evaporates: no crews, no crashes, just pure narrative nitro. AiShi's manifesto? Video creation isn't elite craft anymore — it's the new literacy, and V5.5 just issued the universal decoder ring.

Official Links

Generate with PixVerse V5.5 → https://www.pixverse.ai