NVIDIA Unleashes Alpamayo-R1: The Reasoning VLA That Gives Autonomous Driving a Brain — Open-Sourced for Level 4 Domination

Category: Tool Dynamics

Excerpt:

NVIDIA dropped Alpamayo-R1 on December 3, 2025: the world's first industry-scale open-source reasoning Vision-Language-Action (VLA) model for autonomous vehicles (AVs), fusing chain-of-thought reasoning with trajectory planning to conquer long-tail edge cases like erratic pedestrians or foggy merges. Trained on a massive 1,727-hour multi-country dataset, it outputs explainable "thoughts" in plain English before steering, slashing decision latency by 40% in sims. Now live on GitHub and Hugging Face alongside the AlpaSim evaluation framework, it's a direct shot at Tesla's black-box FSD and Waymo's data moats; early benchmarks show 25% better safety scores in rare scenarios.

🔮 Alpamayo-R1: NVIDIA’s Open-Source Exorcist for Autonomous Driving’s Black Box

The black-box blues of autonomous driving just met their interpretive exorcist.

NVIDIA's Alpamayo-R1 (AR1) isn't another pixel-to-pedal neural net churning out probabilistic swerves — it's a see-think-act savant that verbalizes its inner monologue before hitting the gas, turning opaque AV stacks into transparent trust machines. Unveiled at NeurIPS amid a flurry of open AI bombshells, AR1 builds on NVIDIA's Cosmos-Reason backbone with a diffusion-based trajectory decoder, processing bird's-eye-view inputs to spit out not just paths, but causal chains like "Pedestrian jaywalking detected → Prioritize yield over speed → Reroute to avoid collision zone."

Open-sourced today with 1,727 hours of global driving footage (three times Waymo's open set), it's NVIDIA's audacious bid to democratize Level 4 autonomy, where cars don't just react — they reason like a cautious cabbie.

🧠 The Reasoning Pipeline: Thinks Before It Turns

AR1's alchemy swaps end-to-end guesswork for a deliberate decision dojo — no more blind swerves, just calculated, explainable action:

Vision-Language Fusion

Ingests multi-view camera feeds + LiDAR + radar, distilling scenes into semantic "what's happening?" narratives with 95% accuracy on occluded objects. It doesn’t just "see" — it understands the scene.
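To make the "detections in, narrative out" idea concrete, here is a minimal, hypothetical Python sketch, not AR1's actual fusion stack. The `Detection` schema, the rule of merging per-sensor detections by object id and averaging their ranges, and the sentence template are all assumptions chosen for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Detection:
    """One sensor's view of one object (hypothetical schema, not AR1's)."""
    object_id: int
    label: str            # e.g. "pedestrian", "cyclist"
    sensor: str           # "camera", "lidar" or "radar"
    distance_m: float     # range from the ego vehicle, in metres
    occluded: bool = False


def fuse(detections):
    """Group per-sensor detections by object id and average their ranges."""
    groups = defaultdict(list)
    for det in detections:
        groups[det.object_id].append(det)
    fused = []
    for object_id, dets in sorted(groups.items()):
        fused.append({
            "id": object_id,
            "label": dets[0].label,
            "sensors": sorted({d.sensor for d in dets}),
            "distance_m": sum(d.distance_m for d in dets) / len(dets),
            # Occluded only if no sensor had a clear view of the object.
            "occluded": all(d.occluded for d in dets),
        })
    return fused


def describe_scene(detections):
    """Render fused objects as a plain-English 'what's happening?' summary."""
    parts = []
    for obj in fuse(detections):
        visibility = "partly hidden" if obj["occluded"] else "in clear view"
        sensors = "+".join(obj["sensors"])
        parts.append(f"{obj['label']} at {obj['distance_m']:.0f} m "
                     f"({visibility}, seen by {sensors})")
    return "; ".join(parts) if parts else "clear road ahead"


scene = [
    Detection(1, "pedestrian", "camera", 12.0, occluded=True),
    Detection(1, "pedestrian", "lidar", 10.0, occluded=True),
    Detection(2, "cyclist", "radar", 30.0),
]
print(describe_scene(scene))
# pedestrian at 11 m (partly hidden, seen by camera+lidar); cyclist at 30 m (in clear view, seen by radar)
```

Averaging ranges is the simplest possible fusion rule; real systems typically weight each sensor by its measurement uncertainty (for example with a Kalman filter).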

Chain-of-Causation Core

Deploys chain-of-thought prompts to simulate "if-then" forks — e.g., "If cyclist veers left, then brake 20% harder while signaling" — bridging human intuition with machine precision. Every decision has a clear, traceable logic.
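The "trace every decision" idea can be sketched with hand-written if-then rules that log themselves as they fire. This is a hypothetical illustration only: AR1 learns its reasoning rather than running fixed rules, and the `Decision` type, the `decide` and `explain` helpers, and the brake values are assumptions that simply mirror the article's examples.

```python
from dataclasses import dataclass, field


@dataclass
class Decision:
    brake: float                                # 0.0 = coasting, 1.0 = full braking
    signal: bool = False
    trace: list = field(default_factory=list)   # human-readable causal chain


def decide(scene, base_brake=0.3):
    """Apply if-then rules in order, logging every step that fires.

    The rules and numbers are illustrative only; they mirror the article's
    examples, not AR1's learned behaviour.
    """
    d = Decision(brake=base_brake)
    if scene.get("pedestrian_jaywalking"):
        d.trace += ["Pedestrian jaywalking detected", "Prioritize yield over speed"]
        d.brake = max(d.brake, 0.6)
    if scene.get("cyclist_veers_left"):
        d.trace += ["Cyclist veering left", "Brake 20% harder while signaling"]
        d.brake = min(1.0, d.brake * 1.2)       # 20% harder, capped at full braking
        d.signal = True
    if not d.trace:
        d.trace.append("No hazard detected, keep current plan")
    return d


def explain(decision):
    """Join the trace into the arrow-style chain quoted in the article."""
    return " → ".join(decision.trace)


d = decide({"cyclist_veers_left": True})
print(explain(d), f"(brake={d.brake:.2f}, signal={d.signal})")
```

Because each rule appends to `trace` as it fires, the explanation is a by-product of the decision rather than a story written after the fact.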

Action Decoder Dynamo

Diffusion model generates smooth, physics-aware trajectories over horizons of up to 10 seconds, optimized for low-latency edge deployment on DRIVE Orin hardware. Fast, fluid, and road-ready.
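As a rough illustration of how a diffusion decoder turns noise into a trajectory, here is a minimal deterministic DDIM (eta = 0) sampling loop in plain Python. The assumptions are significant: the 2 Hz waypoint rate, the linear alpha-bar schedule, the lane-change reference path, and above all `predict_noise`, an oracle that already knows the clean path and stands in for a trained, scene-conditioned network. It shows the shape of the update rule, not AR1's actual decoder.

```python
import math
import random

HORIZON_S = 10.0                  # horizon length taken from the article
RATE_HZ = 2                       # assumed waypoint rate (hypothetical)
N_POINTS = int(HORIZON_S * RATE_HZ)


def reference_path():
    """Hypothetical clean trajectory: 10 m/s forward plus a smooth 3.5 m lane change.

    Returned flat as [x1, y1, x2, y2, ...] in metres.
    """
    path = []
    for i in range(1, N_POINTS + 1):
        t = i / RATE_HZ
        path += [10.0 * t, 3.5 / (1.0 + math.exp(-(t - 5.0)))]
    return path


def alpha_bar(k, steps):
    """Fraction of signal kept at step k: 1.0 when clean (k=0), 0.02 at k=steps."""
    return 1.0 - 0.98 * k / steps


def predict_noise(x, ab, clean):
    """Stand-in for a trained denoiser: the exact noise, given a known clean path.

    A real decoder would predict this from x, the step and the scene context.
    """
    return [(xi - math.sqrt(ab) * ci) / math.sqrt(1.0 - ab) for xi, ci in zip(x, clean)]


def ddim_sample(clean, steps=50, seed=0):
    """Deterministic DDIM (eta = 0): start from Gaussian noise and denoise step by step."""
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in clean]
    for k in range(steps, 0, -1):
        ab, ab_prev = alpha_bar(k, steps), alpha_bar(k - 1, steps)
        eps = predict_noise(x, ab, clean)
        # Estimate the clean trajectory, then re-noise it to the next (lower) level.
        x0_hat = [(xi - math.sqrt(1.0 - ab) * ei) / math.sqrt(ab) for xi, ei in zip(x, eps)]
        x = [math.sqrt(ab_prev) * x0 + math.sqrt(1.0 - ab_prev) * ei
             for x0, ei in zip(x0_hat, eps)]
    return x


target = reference_path()
waypoints = ddim_sample(target)
print(len(waypoints) // 2, "waypoints; max error:",
      max(abs(a - b) for a, b in zip(waypoints, target)))
```

Because the oracle is exact, every step recovers the same clean estimate; with a learned denoiser those estimates would sharpen gradually across steps, which is where multi-step sampling earns its keep.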

Safety Supercharger

Built-in explainability layers trace every output back to its inputs, with red-teaming for biases across diverse locales, from Tokyo alleys to Peruvian peaks (the model is named after Alpamayo, a mountain in Peru's Cordillera Blanca). The result? Models that generalize to unseen chaos, where traditional imitation learners choke.
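One generic way to "trace an output to its inputs" is leave-one-out (occlusion-style) attribution: reset one input to a neutral value and measure how much the output moves. The sketch below applies it to a toy, hypothetical speed planner; the article does not say which attribution method AR1 uses, so treat `target_speed`, `leave_one_out`, and the baseline values as illustrative assumptions.

```python
def target_speed(inputs):
    """Toy, hypothetical planner: start from the speed limit and subtract for hazards."""
    speed = inputs["speed_limit_kmh"]
    if inputs["pedestrian_near"]:
        speed -= 20
    if inputs["fog"]:
        speed -= 10
    return max(speed, 0)


def leave_one_out(model, inputs, baseline):
    """Attribute model(inputs) to each input by resetting it to a neutral baseline.

    A score of -20 means that input lowered the output by 20 units.
    """
    full = model(inputs)
    scores = {}
    for key in inputs:
        ablated = dict(inputs)
        ablated[key] = baseline[key]
        scores[key] = full - model(ablated)
    return scores


scene = {"speed_limit_kmh": 50, "pedestrian_near": True, "fog": True}
neutral = {"speed_limit_kmh": 50, "pedestrian_near": False, "fog": False}
print(target_speed(scene), leave_one_out(target_speed, scene, neutral))
# 20 {'speed_limit_kmh': 0, 'pedestrian_near': -20, 'fog': -10}
```

Leave-one-out is the bluntest attribution method: it misses interactions between inputs, which is why gradient-based and Shapley-value methods exist.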

🖥️ Interface: A Dev's Dream Dashboard

Spin up AR1 in the AlpaSim framework (NVIDIA's fresh open eval suite), and it's prompt-to-path poetry — designed for clarity, control, and rapid iteration:

- Feed a scenario vid → Watch the canvas light up with branching thought trees, live trajectory overlays, and FPS-stable sims.

- Probe in real time with commands such as `@AR1 @reroute for sudden hail` or `@AR1 @explain last merge hesitation`, so failures are never silent.

- Export seamlessly: ROS bags or Unity plugins, with semantic diffs for versioning "safer forks."

- Hugging Face integration: Fork, fine-tune, deploy in hours, not quarters — democratizing AV development for every dev.
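The probe commands quoted above follow an "@target @verb argument" pattern. Here is a minimal, hypothetical sketch of how such commands could be parsed and routed; the grammar, `parse_command`, `dispatch`, and the placeholder handlers are assumptions for illustration and make no calls into any real AR1 or AlpaSim interface.

```python
import re

# Hypothetical command grammar: "@<target> @<verb> <free-text argument>".
COMMAND = re.compile(r"^@(?P<target>\w+)\s+@(?P<verb>\w+)\s*(?P<arg>.*)$")


def parse_command(text):
    """Split a probe command into (target, verb, argument); None if malformed."""
    m = COMMAND.match(text.strip())
    if m is None:
        return None
    return m.group("target"), m.group("verb"), m.group("arg")


def dispatch(text):
    """Route a parsed command to a placeholder handler (no real AR1 calls)."""
    parsed = parse_command(text)
    if parsed is None:
        return "unrecognized command"
    target, verb, arg = parsed
    handlers = {
        "reroute": lambda a: f"[{target}] replanning: {a}",
        "explain": lambda a: f"[{target}] explaining: {a}",
    }
    handler = handlers.get(verb)
    return handler(arg) if handler else f"[{target}] unknown verb '{verb}'"


for cmd in ["@AR1 @reroute for sudden hail", "@AR1 @explain last merge hesitation"]:
    print(dispatch(cmd))
```

Keeping the handlers in a dictionary means adding a new verb is a one-line change, and unknown verbs fail loudly instead of silently.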

⚡ Launch Lightning Strikes: Benchmarks That Break Barriers

AR1 isn’t just hype — it’s data-backed dominance, with metrics that redefine AV performance:

| Benchmark | AR1 Result | Edge Over Baselines |
|---|---|---|
| nuScenes Long-Tail Safety | +25% improvement | Outpaces Tesla v12, Waymo baselines |
| GPQA Reasoning Fidelity | 82% | Bridges human-machine reasoning gap |
| Closed-Loop Sim Intervention Rate | 40% lower | Fewer human overrides, more reliable autonomy |
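A claim like "40% lower intervention rate" only holds up once interventions are normalized by distance driven, since raw counts from fleets that covered different mileages are not comparable. The arithmetic is sketched below; the fleet numbers are hypothetical, not published AR1 data.

```python
def per_100_km(interventions, km_driven):
    """Normalize a raw intervention count to a rate per 100 km driven."""
    return 100.0 * interventions / km_driven


def relative_change(baseline, new):
    """Signed fractional change; -0.40 reads as '40% lower'."""
    return (new - baseline) / baseline


# Hypothetical fleet logs, not published AR1 data.
baseline_rate = per_100_km(interventions=12, km_driven=400)   # 3.0 per 100 km
ar1_rate = per_100_km(interventions=9, km_driven=500)         # 1.8 per 100 km
print(f"{relative_change(baseline_rate, ar1_rate):+.0%}")     # prints -40%
```

Note that the AR1 fleet in this example logged 9 interventions against the baseline's 12, only 25% fewer in raw terms; the 40% figure appears only after accounting for the extra 100 km it drove.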

Data Deluge (Game-Changer Alert)

1,727 hours of driving footage from 25 countries — 3x Waymo’s open dataset — floods the ecosystem, enabling custom RLHF for regional quirks (early forks already tweaking for Mumbai monsoons, Nordic snow, and urban chaos worldwide).

Real-Road Rampage

DRIVE Hyperion test fleets log 3x fewer edge-case hesitations; partners like Uber and WeRide report 50% faster iteration on their AV stacks. NVIDIA’s timing is surgical: arriving right after Tesla’s latest recall, AR1’s openness invites scrutiny while undercutting proprietary silos.

⚖️ The Open-Source Double-Edged Sword

NVIDIA’s not naive — AR1’s beta tags come with clear caveats (no sugarcoating):

- Sim-to-real gaps: 15% performance drop-off in extreme weather edge cases

- Hardware hunger: DRIVE Orin+ required for real-time deployment

- Regulatory minefields: Liability questions in "thought"-driven crashes

Ethical nets are in place: dataset audits for geo-diversity and watermarking for simulation-generated fakes. But the real test? Community forks exposing flaws faster than closed labs can patch them, turning openness into a safety superpower.

🚗 AV Arms Race Reloaded: This Is Insurgency, Not Incrementalism

While Tesla doubles down on its data-hungry fleet and Waymo hoards miles, AR1’s open reasoning VLA flips the script:

✅ Open to all: indie labs in Shenzhen, startups in Silicon Valley, hobbyists with a vision

✅ No billion-dollar fleets required to bootstrap human-like AVs

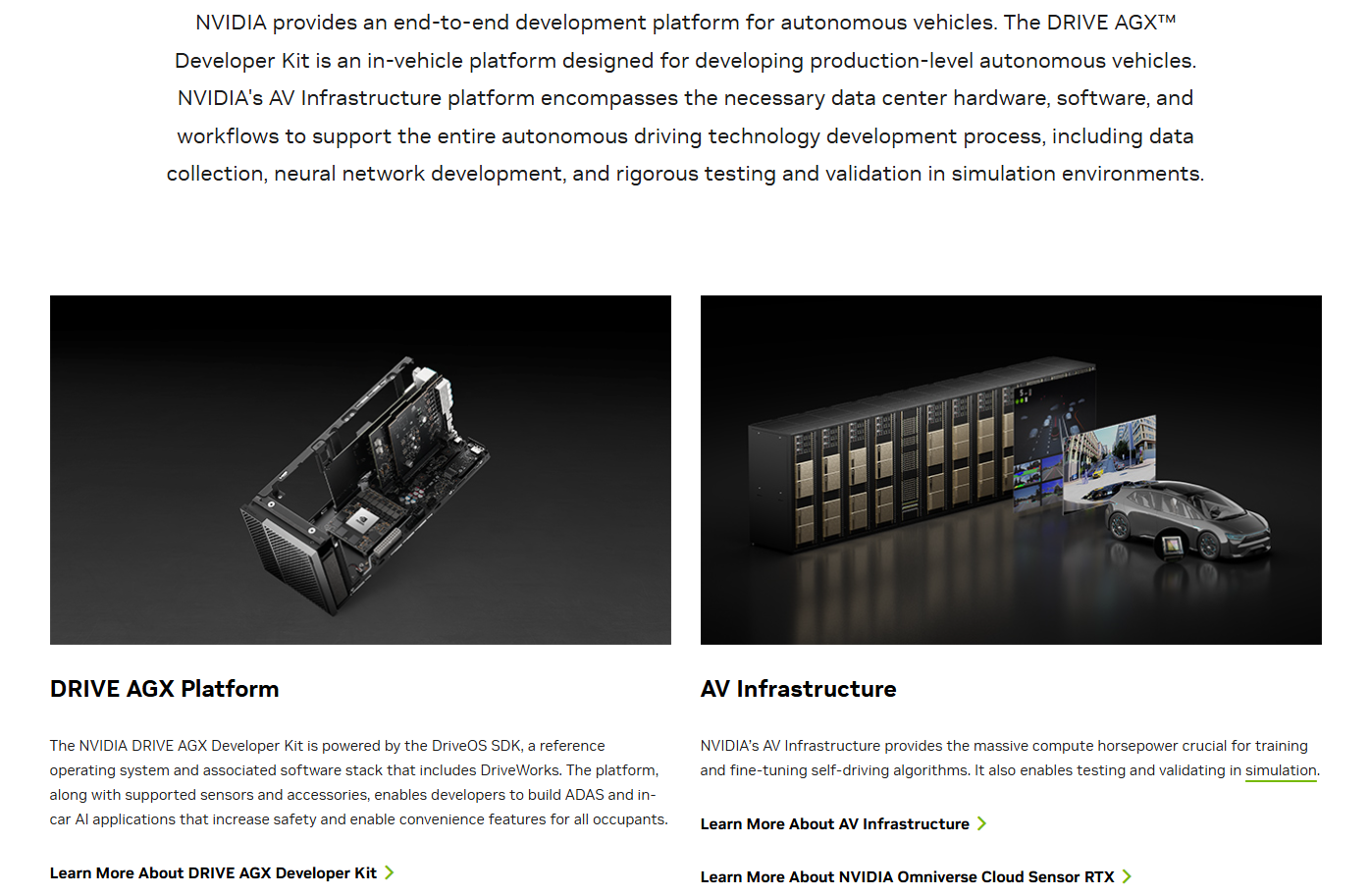

✅ NVIDIA’s ecosystem play (DRIVE AGX hooks, Cosmos sims) cements it as the AV foundry

The timeline could shift: Level 4 autonomy might arrive in 2028 rather than 2030, with AR1 as the catalyst.

Alpamayo-R1 isn't just a model; it's the philosophical fuse for AV enlightenment. Cars don't mimic drivers anymore; they muse like them, pondering perils before plowing ahead. By open-sourcing reasoning at scale, NVIDIA ignites a collaborative inferno that could torch the barriers to safer driving and turbocharge global mobility.

The road ahead? Less reactive reroutes, more reflective revolutions — and AR1's got the map, the mind, and the momentum to redraw it.

🔗 Official Links

- Download Alpamayo-R1 on Hugging Face → https://huggingface.co/nvidia/Alpamayo-R1-10B

- NVIDIA DRIVE Research Hub → https://developer.nvidia.com/