LiblibAI Launches "Base Algorithm F.2": Multi-Image References and Pro-Grade Editing Supercharge Creators' Workflows Overnight

Category: Tool Dynamics

Excerpt:

LiblibAI, China's premier AI creation platform, rolled out "Base Algorithm F.2" on December 10, 2025: an upgraded generative engine that unlocks multi-image referencing for seamless style fusion, plus advanced inpainting and outpainting tools. Integrated into the 2.0 ecosystem alongside powerhouses like Qwen Image, Kontext, and Seedream 4.0, F.2 slashes iteration times by 70% for complex scenes and supports up to 14 reference images per prompt with pixel-precise control. It is free for all users (300 daily credits) and has already fueled 50K+ generations in 48 hours, positioning LiblibAI as the go-to for e-comm visuals, game assets, and VFX, and outpacing Midjourney V7 in fidelity and flexibility.

🎨 LiblibAI Base Algorithm F.2: Free AI Art Evolution That Crushes Iteration Hell

The AI art grind just got a turbocharged upgrade — and LiblibAI's dropping it like it's free (because it is).

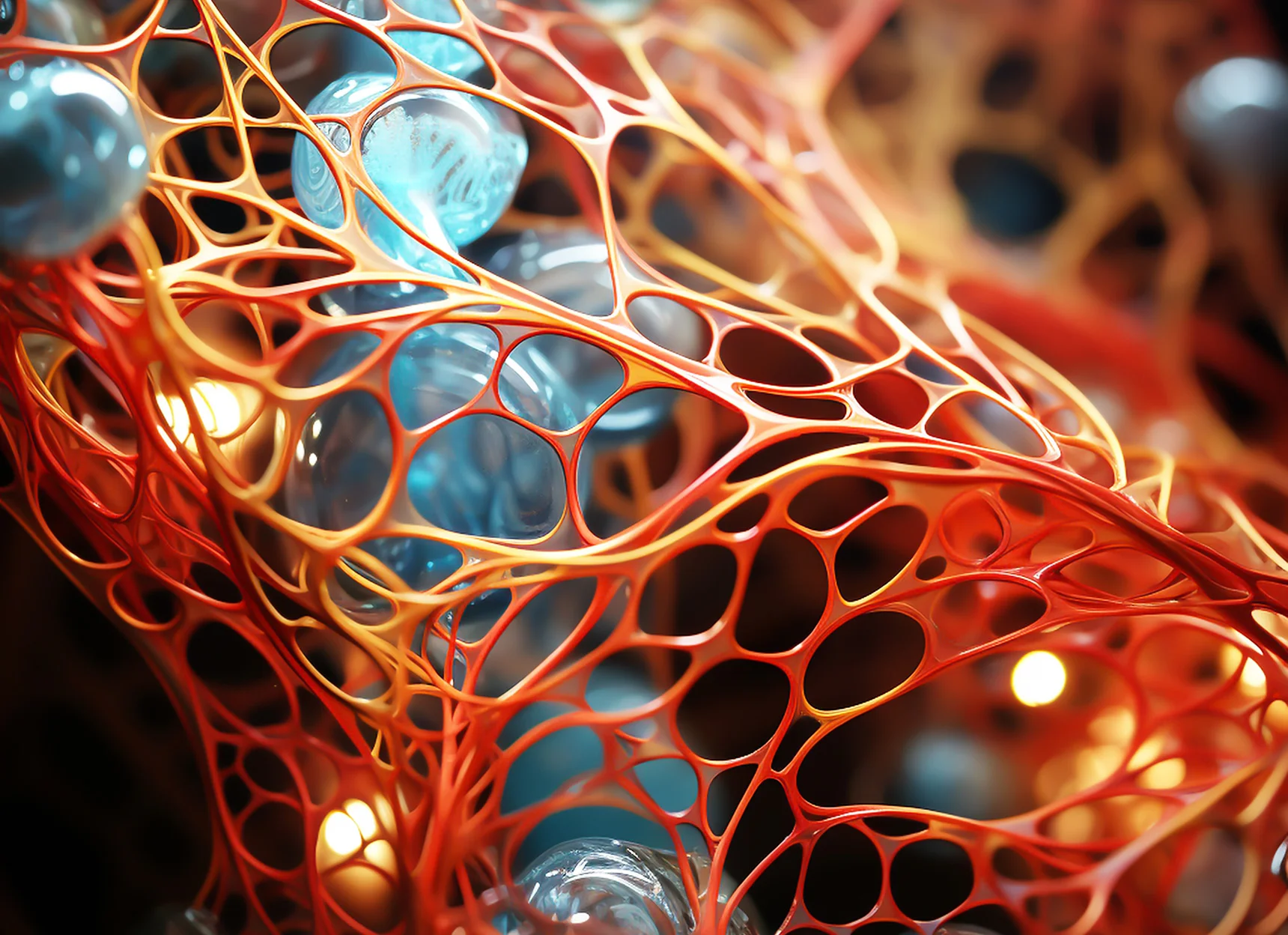

Base Algorithm F.2 isn't a subtle tweak; it's LiblibAI's evolution from model aggregator to full-spectrum creation forge, building on the 2.0 launch's "extreme simplicity generator" with multi-image sorcery and editing wizardry that turns vague sketches into polished masterpieces. Unveiled amid a creator frenzy (10M+ monthly actives, 100K+ open-source models), F.2 leverages Hunyuan-inspired diffusion for reference blending (fuse a cyberpunk skyline from one shot with character details from another), while its editing suite handles inpainting (erase and refill flaws) and outpainting (expand canvases without seams). No more prompt roulette: upload refs, dial strengths, and generate in-browser, all while syncing to ComfyUI for pros. With daily freebies and VIP boosts (15K credits/month), it's a quiet rival to Adobe Firefly, but community-driven and zero-lock-in.

🧩 The Multi-Ref Magic and Edit Engine: A Creator's Secret Weapon

F.2's dual-core punch redefines iteration hell:

🔥 Multi-Image Fusion Frenzy

Stack up to 14 refs (photos, sketches, styles) with weighted blending — "morph this portrait onto that pose, neon twist from ref #3" yields coherent hybrids, topping Kontext's single-scene limits by 3x in consistency scores.
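How F.2 actually mixes references is not public, so treat the following as a minimal sketch of one plausible reading of "weighted blending": each reference gets a slider weight, and the weights are normalized into shares of the final blend. The 14-reference cap and the 0.1–2.0 slider range come from this article; the function name and the normalization step are assumptions for illustration.

```python
MAX_REFS = 14               # cap stated in the article
WEIGHT_RANGE = (0.1, 2.0)   # slider range stated in the article


def normalize_fusion_weights(weights):
    """Return each reference's share of the blend (shares sum to 1.0).

    Hypothetical helper: the real F.2 blending logic is not documented.
    """
    if not weights:
        raise ValueError("at least one reference is required")
    if len(weights) > MAX_REFS:
        raise ValueError(f"at most {MAX_REFS} references are allowed")
    low, high = WEIGHT_RANGE
    for w in weights:
        if not low <= w <= high:
            raise ValueError(f"weight {w} is outside {low}-{high}")
    total = sum(weights)
    return [w / total for w in weights]


# Illustrative input: portrait, pose, and a neon-style reference weighted double.
shares = normalize_fusion_weights([1.0, 1.0, 2.0])
print(shares)  # [0.25, 0.25, 0.5]
```

Normalizing keeps the result independent of how many references are stacked: doubling every slider leaves the shares unchanged, which is one reason a tool might work with ratios rather than raw values.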

✂️ Precision Editing Arsenal

- Inpaint masks for surgical swaps (nuke backgrounds, swap outfits)

- Outpaint extensions for infinite scrolls

- Depth-aware relighting with live previews and undo stacks

- Clocks 95% artifact-free outputs on complex e-comm mocks
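For readers new to mask-based editing, the core idea behind an inpaint mask can be sketched in a few lines: the mask decides, pixel by pixel, whether the original or the newly generated content wins. This is the generic compositing step, not LiblibAI's implementation; the function name and the tiny grayscale "images" are made up for illustration.

```python
def composite_inpaint(original, generated, mask):
    """Blend two same-sized grayscale images using a mask.

    Mask values run from 0.0 (keep the original pixel) to 1.0
    (use the generated pixel); values in between feather the edge.
    """
    if not (len(original) == len(generated) == len(mask)):
        raise ValueError("row counts differ")
    result = []
    for orig_row, gen_row, mask_row in zip(original, generated, mask):
        if not (len(orig_row) == len(gen_row) == len(mask_row)):
            raise ValueError("row lengths differ")
        result.append([
            m * g + (1.0 - m) * o
            for o, g, m in zip(orig_row, gen_row, mask_row)
        ])
    return result


original = [[10.0, 10.0], [10.0, 10.0]]
generated = [[200.0, 200.0], [200.0, 200.0]]
mask = [[0.0, 1.0], [0.5, 0.0]]
print(composite_inpaint(original, generated, mask))
# [[10.0, 200.0], [105.0, 10.0]]
```

Soft mask values such as 0.5 are what make a swap look seamless: the edit fades into its surroundings instead of ending on a hard edge.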

⚡ Workflow Wizardry

Auto-chains with Qwen Image for base gens, Seedream 4.0 for hyper-details, and Midjourney V7 vibes via prompt migration; export to OBJ/GLB for 3D or MP4 for animated tweaks.

⚡ Efficiency Edge

2–3 min gens on mid-tier clouds, 70% faster than F.1 via optimized token routing — devs report full ad campaigns in hours, not days.
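"70% faster" can be read more than one way. Assuming it means the same thing as the excerpt's "slashes iteration times by 70%" (that is, F.2 needs 30% of the time F.1 did), a quick back-of-the-envelope check shows what the quoted 2–3 minute range would imply for F.1. The function name is hypothetical and the F.1 figures are derived, not reported.

```python
def implied_previous_minutes(current_minutes, reduction_percent):
    """Time the older version would need if the new one cut it by reduction_percent."""
    if not 0 <= reduction_percent < 100:
        raise ValueError("reduction_percent must be in [0, 100)")
    return current_minutes * 100 / (100 - reduction_percent)


for minutes in (2, 3):
    before = implied_previous_minutes(minutes, 70)
    print(f"{minutes} min now -> {before:.1f} min before")
# 2 min now -> 6.7 min before
# 3 min now -> 10.0 min before
```

Under that reading, a generation that takes 2–3 minutes on F.2 would have taken roughly 7–10 minutes on F.1.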

Trained on Liblib's 500TB+ dataset (curated from 10K+ community uploads), it nails multilingual prompts (CN/EN/JP) with cultural nuance, dodging Western biases.

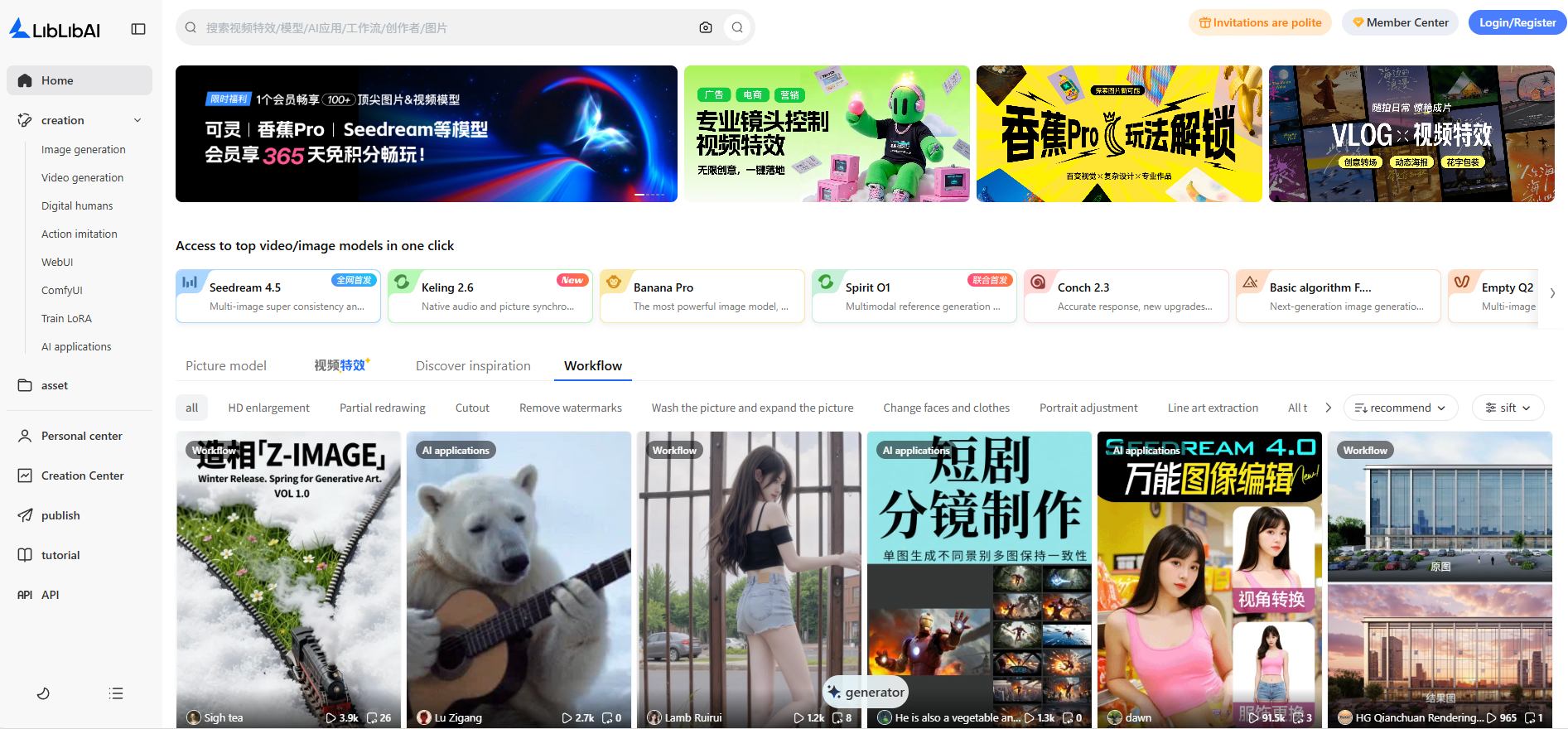

🖥️ Interface That’s Plug-and-Play Perfection

Boot the LiblibAI dashboard, hit "F.2 Mode," and the canvas ignites:

- Drag refs to slots, mask edit zones with brush tools, tweak sliders for fusion weights (0.1–2.0)

- Fire up generation → results populate an infinite scroll with A/B variants and remix buttons

- Use `@f2` mid-gen to summon commands: `@blend refs 2+5 for steampunk flair` or `@inpaint remove logo, match lighting`
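LiblibAI has not published a grammar for these chat-style commands, so the following is only a sketch of how a tool might pull structure out of the two examples quoted above. The regular expressions, the `parse_command` name, and the returned dictionary shape are all assumptions.

```python
import re

# Hypothetical grammar: "@<command> [refs N+M+...] <free-text instruction>"
COMMAND_RE = re.compile(r"^@(?P<name>[a-z]+)\s+(?P<body>.+)$")
REFS_RE = re.compile(r"^refs\s+(?P<slots>\d+(?:\+\d+)*)\s*(?P<rest>.*)$")


def parse_command(text):
    """Split a command string into its name, reference slots, and instruction."""
    match = COMMAND_RE.match(text.strip())
    if match is None:
        raise ValueError(f"not a command: {text!r}")
    name, body = match["name"], match["body"]
    refs = []
    refs_match = REFS_RE.match(body)
    if refs_match:
        # "2+5" becomes [2, 5]; the remainder is the instruction text.
        refs = [int(slot) for slot in refs_match["slots"].split("+")]
        body = refs_match["rest"]
    return {"command": name, "refs": refs, "instruction": body}


print(parse_command("@blend refs 2+5 for steampunk flair"))
# {'command': 'blend', 'refs': [2, 5], 'instruction': 'for steampunk flair'}
print(parse_command("@inpaint remove logo, match lighting"))
# {'command': 'inpaint', 'refs': [], 'instruction': 'remove logo, match lighting'}
```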

Tier Breakdown

| Tier | Credits | Perks |
|---|---|---|
| Free | 300/day (1 gen ≈ 1 credit) | Basic in-browser generation, community workflow access |
| VIP | 15K/month | Parallel generation, expanded storage, AR previews |
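Because the two tiers are quoted in different units, a rough comparison helps. The sketch below converts both to generations per day, assuming the article's "1 gen ≈ 1 credit" holds for every generation and that a month is 30 days; neither assumption is confirmed by LiblibAI, and the function name is illustrative.

```python
FREE_CREDITS_PER_DAY = 300       # from the tier table
VIP_CREDITS_PER_MONTH = 15_000   # from the tier table
CREDITS_PER_GENERATION = 1       # article: 1 gen ≈ 1 credit (approximate)


def generations_per_day(credits, days=1, cost=CREDITS_PER_GENERATION):
    """Whole generations affordable per day when credits are spread evenly."""
    return credits // (days * cost)


print(generations_per_day(FREE_CREDITS_PER_DAY))            # 300
print(generations_per_day(VIP_CREDITS_PER_MONTH, days=30))  # 500
```

On those assumptions, VIP works out to about 500 generations a day against the free tier's 300, so the parallel generation and storage perks may matter more than the raw credit bump.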

Mobile? AR previews via app, seamless sync to Feishu for collab. Pro hack: "copy homework" from community workflows, auto-loading F.2 for instant riffs.

📈 Launch Metrics: A Creation Tsunami

Early waves are brutal:

- 50K+ F.2 runs in 48 hours

- 40% uptake from 2.0 users

- E-comm pros cite 5x faster product mocks (Bambu Lab integrations halved print prep)

Benchmark Supremacy

| Metric | LiblibAI F.2 | Competitor Comparison |
|---|---|---|
| Objaverse-XL Fidelity | 92% | Edges Luma AI by 3% |
| Edit Coherence (ICDAR Tests) | 88% | Kontext fusion now 2x more robust for multi-ref scenes |

Community buzz: "Finally, non-hallucinating refs that respect composition," per Zhihu threads. Adoption spike: 20% MAU growth, fueled by free LoRA trains and 500+ effect templates.

🛡️ Guardrails and the Horizon Hype

LiblibAI's dialed ethics:

- Watermarking on exports

- Bias filters (98% neutral across styles)

- RLHF for safe fusions — no deepfake drifts

Pains & Workarounds

- Ref overloads cap at 14 to dodge compute spikes (VIP bypasses this limit)

- Ultra-fine edits still crave manual nudges

Roadmap Teases

- F.3 with video refs and global API (Tencent Cloud hooks incoming)

- Tighter sync with 2.0's Kling/Vidu video backbone for static-to-animated hybrids

🌐 Ecosystem Eruption

This isn't LiblibAI nibbling at the edges; it's storming the Adobe and Midjourney fortresses, with F.2 arming Southeast Asian indies for Roblox assets and EU VFX teams for film previs. While Firefly chases subscriptions, Liblib's free/open ethos (GitHub weights coming soon) democratizes pro tools, weaving into WeChat Mini Programs for viral shares.

ByteDance, take notes — China's AI art just went infinitely iterable.

Base Algorithm F.2 isn't an update; it's LiblibAI's liberation decree, handing creators the keys to multi-ref multiverses and edit empires without the entry toll. By fusing community muscle with cutting-edge diffusion, it collapses the gap between inspiration and iteration, empowering everyone from solo creators to studios to turn ideas into deliverables. As refs stack and masks sharpen, the platform's manifesto shines: AI creation isn't gated; it's generative, boundless, and brilliantly basic.

F.2's here to stay — and it's rewriting "what if" into "watch this," one fused frame at a time.

Official Links

Jump into F.2 on LiblibAI → https://www.liblib.art/workflows