Tool Dynamics

Explore Tool Dynamics for insights on tool performance, predictive maintenance strategies, and lifecycle management to maximize efficiency and reduce costs.

Al Jazeera Unveils "The Core": A Groundbreaking AI Platform Built on Google Cloud That Turns AI into an Active Partner in Journalism

On December 21, 2025, Al Jazeera Media Network announced the launch of "The Core" — a transformative AI-integrated news platform developed in deep collaboration with Google Cloud. Powered by Gemini Enterprise, Vertex AI Search, and advanced agentic capabilities, this six-pillar system shifts AI from a passive tool to a proactive collaborator, empowering journalists with real-time data processing, immersive content creation, and automated workflows. The initiative marks a bold leap for the media industry, redefining how global news is produced and consumed in the AI era.

Google Expands Live Translate Beyond Pixel Buds: Any Headphones Now Become Instant Real-Time Interpreters

On December 12, 2025, Google announced a major upgrade to Google Translate, powered by Gemini's latest native speech-to-speech capabilities. The standout feature: live real-time audio translation now works with any connected headphones or earbuds on Android and is no longer exclusive to Pixel Buds. Rolling out in beta starting in the US, Mexico, and India, it supports over 70 languages and preserves speaker tone and cadence for natural listening. Early testers praise its seamless integration. The move marks a bold push toward democratizing AI voice translation and challenges Apple's AirPods-locked equivalent.

Luma AI Drops Ray3 Modify: One-Click Outfit & Scene Swaps on Real Footage — While Locking In Every Nuance of Human Performance

Luma AI unveiled Ray3 Modify on December 18, 2025. The upgrade to Dream Machine targets one of AI video's biggest pain points: transforming real actor footage with scene changes, costume swaps, or even character redesigns while preserving the original motion, timing, eye lines, and emotional delivery. Powered by precise keyframe controls, character reference locking, and scene-aware fidelity, it turns "shoot once, reimagine forever" into reality. Early adopters report cutting reshoots by 90% and call it the hybrid-AI workflow that blends human authenticity with generative output.

OpenAI Launches ChatGPT App Directory: The Official "App Store" Opens for Developer Submissions, Turning ChatGPT into a True AI Platform

OpenAI officially launched the ChatGPT App Directory on December 17-18, 2025. It is a built-in marketplace where users can browse, connect, and invoke third-party apps directly within conversations. Developers can now submit apps for review via the Apps SDK (beta), enabling actions such as ordering food via DoorDash, creating playlists on Spotify or Apple Music, or designing in Canva, all without leaving ChatGPT. Early integrations include Zillow, Photoshop, and Slack, and the directory is accessible at chatgpt.com/apps. The launch moves ChatGPT from chatbot to ecosystem hub. Monetization is limited to external links for now, with in-app options teased for the future.

OpenAI Launches GPT-5.2-Codex: The Most Powerful Agentic Coding Model Yet — Revolutionizing Professional Software Engineering and Cybersecurity

OpenAI released GPT-5.2-Codex on December 18, 2025. The latest model in its agentic coding line, it is a fine-tuned variant of GPT-5.2 optimized for Codex environments. Featuring advanced context compaction for million-token workflows, stronger large-scale refactors and migrations, native Windows support, and improved cybersecurity capabilities, it outperforms its predecessors on SWE-Bench Pro and in real-world vulnerability discovery. It is now the default in Codex CLI, IDE extensions, and cloud agents, and early adopters report completing complex tasks 3x faster, strengthening OpenAI's position in AI-powered developer tools.

Apple Open-Sources SHARP: The Lightning-Fast AI That Revives 2D Photos as Photorealistic 3D Scenes in Under a Second

Apple Machine Learning Research unveiled SHARP on December 17, 2025. The open-source model reconstructs metric-accurate 3D Gaussian scenes from a single 2D photo in less than one second on a standard GPU. Powered by feedforward neural prediction of millions of Gaussians, it delivers state-of-the-art quality on perceptual metrics such as LPIPS (25-34% improvement) and DISTS (21-43% improvement), while cutting synthesis time by orders of magnitude. Fully open on GitHub and Hugging Face, SHARP unlocks instant 3D for AR, spatial computing, and legacy photo revival, with no multi-shot captures required.

Meta Launches SAM Audio: The First Unified Multimodal Model That Isolates Any Sound from Complex Mixtures with Intuitive Prompts

Meta unveiled SAM Audio on December 16, 2025 — the groundbreaking extension of its Segment Anything family into audio, claiming the world's first unified multimodal model for sound separation. It isolates specific sounds like vocals, instruments, or ambient noise using text descriptions, visual clicks in videos, or time-span markings — alone or combined — all in a seamless, prompt-driven workflow. Open-sourced with small, base, and large variants, plus benchmarks and a perception encoder, it's now live on the Segment Anything Playground and Hugging Face, slashing barriers for creators and accelerating innovations in editing, accessibility, and beyond.

OpenAI Upgrades Image Generation: GPT-Image 1.5 Delivers Persistent Editing, 4x Faster Speed, 20% Cheaper — Professional-Grade Images from Text Alone

OpenAI released GPT-Image 1.5 on December 17, 2025 — a major leap in its flagship image generation model that finally solves the "object drift" nightmare in iterative editing while slashing generation time to under 3 seconds per 1024x1024 image. With persistent object memory across edits, 4x overall speed gains, and a 20% price cut across API tiers, this upgrade directly challenges Midjourney v7 and Flux Pro while making DALL·E 3 feel obsolete. Now live in ChatGPT Plus/Pro, API, and Labs, early users report 5x more productive workflows for design, marketing, and concepting.

XREAL Launches XREAL 1S: Self-Developed X1 AI Chip Powers Ultra-Low Latency AR Glasses with Native 3DoF and Real-Time 2D-to-3D Magic

XREAL unveiled the XREAL 1S AR glasses on December 17, 2025, the first consumer AR device powered by its in-house X1 spatial computing chip. Delivering native 3DoF tracking, a 3 ms motion-to-photon (M2P) latency, and system-level real-time 2D-to-3D conversion, it turns ordinary videos, 2D games, and apps into immersive stereoscopic experiences without extra processing. Priced aggressively against Apple Vision Pro clones, the 1S signals XREAL's vertical integration push, with early hands-on reports calling it "the lightest, most responsive AR wearable yet."

Sugon Unleashes scaleX Supercluster: China's First True 10,000+ Card AI Beast with World-First 640-Card Per Cabinet Density

On December 18, 2025, Sugon (Dawning Information Industry) unveiled the scaleX supercluster at the HAIC2025 conference in Kunshan, China's first physical showcase of a massive-scale AI computing system. Built from 16 scaleX640 ultra-nodes of 640 cards each, interconnected via the proprietary scaleFabric network, it deploys 10,240 AI accelerator cards for total compute exceeding 5 EFLOPS. Breakthroughs include the world's first single-cabinet 640-card node with immersion phase-change liquid cooling, a 20x density boost, native RDMA networking at 400 Gb/s with sub-1 μs latency, and tiered data optimizations that lift accelerator utilization by 55%. Early deployments signal China's push beyond overseas 2027 roadmaps.

Google Drops Gemini 3 Flash: The High-Speed, Low-Cost Beast That Makes Frontier Intelligence Everyday Magic

Google DeepMind unleashed Gemini 3 Flash on December 17, 2025 — a lightning-fast, ultra-efficient variant of the Gemini 3 family that packs Pro-level reasoning, multimodal mastery, and agentic coding into a model that's up to 3x quicker and a fraction of the cost. Now the default powerhouse in the Gemini app, AI Mode in Search, and developer tools like Antigravity and Vertex AI, it crushes benchmarks while slashing latency, democratizing frontier smarts for billions. Early adopters are already reporting explosive productivity gains, with this "Flash" upgrade poised to eclipse rivals in the race for accessible, scalable AI.

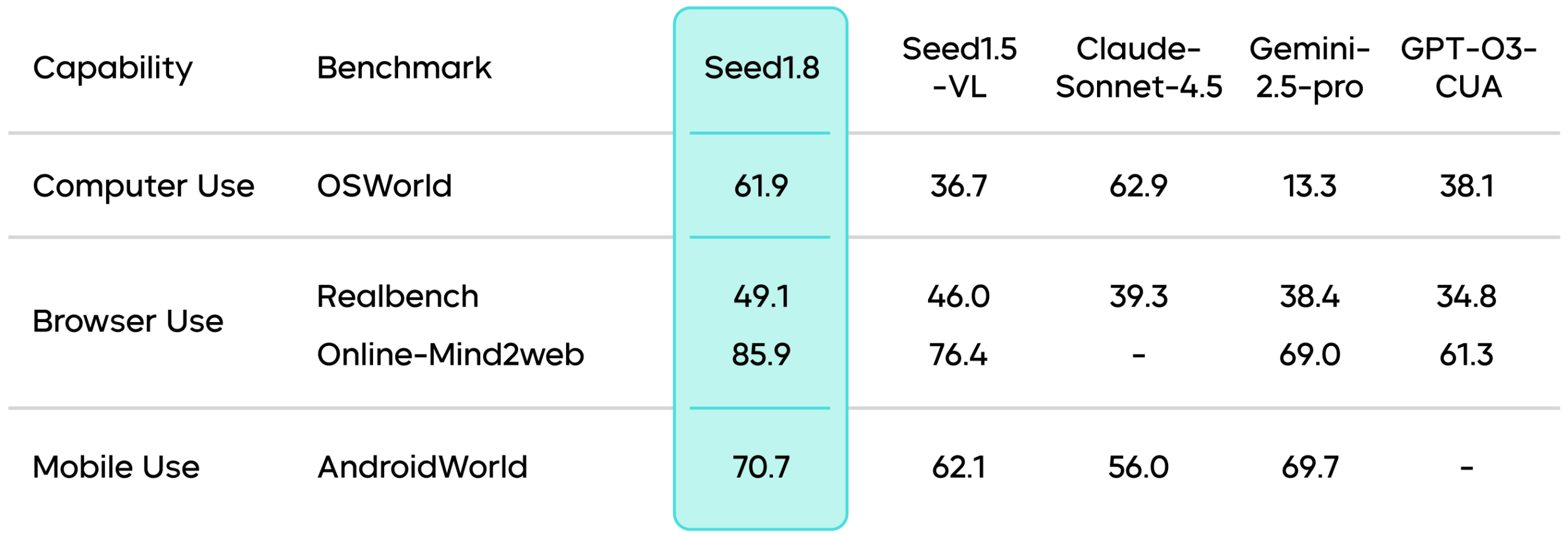

ByteDance Unveils Seed1.8: The Universal Agent Model That Fuses Perception, Reasoning, and Action into One Seamless Brain

ByteDance's Seed Team officially released Seed1.8 on December 17, 2025. It is a generalized real-world agent model that integrates core LLM and VLM strengths with multi-turn interaction, tool calling, code execution, and iterative decision-making. Designed as a unified agentic interface, it supports search, GUI navigation, and complex workflows without task-specific pipelines. Now available via the Doubao platform and the Volcano Engine API, Seed1.8 pushes multimodal agent capabilities into the global first tier, with early benchmarks showing superior performance on BrowseComp and other agentic tasks.